- Software Developers: Write, refactor, and debug code faster with AI-assisted development.

- AI Engineers & Researchers: Experiment with advanced reasoning and model capabilities for technical use cases.

- Startups & Tech Founders: Speed up MVP development and reduce engineering workload.

- DevOps & Platform Teams: Improve automation scripts and infrastructure-related code.

- Enterprises: Integrate powerful AI models into internal tools and developer platforms.

How to Use Devstral 2?

- Access the Model: Use Devstral 2 through Mistral AI’s platform or supported APIs.

- Choose Your Use Case: Apply it for coding, reasoning, debugging, or technical content generation.

- Generate AI-Assisted Output: Produce high-quality code, explanations, or structured solutions.

- Test & Refine: Review outputs and iterate to ensure correctness and performance.

- Deploy & Scale: Integrate Devstral 2 into applications, workflows, or developer tools.
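The access and generation steps above can be sketched as a single HTTP call. This is a minimal illustration, not an official client: it assumes Mistral AI's chat completions endpoint and an OpenAI-style response shape, and the model id `devstral-latest` is a placeholder assumption that should be checked against the model list in your Mistral account. The helper names `build_request` and `ask` are hypothetical.

```python
import json
import urllib.request

# Assumption: Mistral AI's public chat completions endpoint.
API_URL = "https://api.mistral.ai/v1/chat/completions"
# Assumption: placeholder model id; confirm the exact Devstral 2 id before use.
MODEL_ID = "devstral-latest"


def build_request(prompt: str, api_key: str, model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for a single user prompt."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,  # low temperature tends to suit code generation
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str, api_key: str) -> str:
    """Send the request and return the first choice's text (assumed response shape)."""
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

To try it, call `ask("Write a Python function that reverses a string.", api_key)` with a key from your own account, then review the returned code before using it, in line with the "Test & Refine" step.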

- Developer-Optimized AI Model: Specifically designed for coding and technical reasoning tasks.

- Strong Code Understanding: Handles complex programming logic and multi-step reasoning.

- Efficient Performance: Balances speed and accuracy for real-world development needs.

- Scalable Integration: Suitable for both small projects and large enterprise systems.

- Built by Mistral AI: Backed by a research-driven AI company focused on open and efficient models.

- Developer-Centric Design: Tailored for software development and technical workflows.

- High-Quality Code Generation: Produces clean, structured, and useful code outputs.

- Flexible Integration Options: Works well with APIs and custom developer environments.

- Strong Reasoning Capabilities: Effective for debugging and problem-solving tasks.

- Requires Technical Knowledge: Best suited for users with development experience.

- May Need Human Review: Outputs should be validated for production-critical systems.

- Advanced Usage May Require Setup: Integration and customization can take time.

Free

Free

Chat. Search. Learn. Code. Create.

Access to Mistral’s SOTA AI models.

Save and recall up to 500 memories.

Group chats into projects.

Full access to Connectors directory.

Pro

$14.99/mo

More messages and web searches.

30x more extended thinking.

5x more Deep Research reports.

Up to 15GB of document storage.

Unlimited projects.

Chat support.

Team

$24.99/mo

Up to 200 flash answers per user per day.

Up to 30GB of storage per user.

Domain name verification.

Data export.

Similar AI Tools

grok-2-1212

Grok 2 – 1212 is xAI’s enhanced version of Grok 2, released December 12, 2024. It is designed to run up to 3× faster, with sharper accuracy, improved instruction-following, and stronger multilingual support. It includes web search, citations, and the Aurora image-generation feature. It is available to all users on X, with Premium tiers getting higher usage limits.

Mistral Magistral

Magistral is Mistral AI’s first dedicated reasoning model, released on June 10, 2025, available in two versions: open-source 24B Magistral Small and enterprise-grade Magistral Medium. It’s built to provide transparent, multilingual, domain-specific chain-of-thought reasoning, excelling in step-by-step logic tasks like math, finance, legal, and engineering.

Mistral Saba

Mistral Saba is a 24 billion‑parameter regional language model launched by Mistral AI on February 17, 2025. Designed for native fluency in Arabic and South Asian languages (like Tamil, Malayalam, and Urdu), it delivers culturally-aware responses on single‑GPU systems—faster and more precise than much larger general models.

Mistral Document AI

Mistral Document AI is Mistral AI’s enterprise-grade document processing platform, launched May 2025. It combines the OCR model mistral-ocr-latest with structured data extraction, document Q&A, and natural language understanding, delivering 99%+ OCR accuracy, support for over 40 languages and complex layouts (tables, forms, handwriting), and processing at up to 2,000 pages/min per GPU.

Mistral Small 3.1

Mistral Small 3.1 is the March 17, 2025 update to Mistral AI's open-source 24B-parameter small model. It offers instruction-following, multimodal vision understanding, and an expanded 128K-token context window, delivering performance on par with or better than GPT‑4o Mini, Gemma 3, and Claude 3.5 Haiku—all while maintaining fast inference speeds (~150 tokens/sec) and running on devices like an RTX 4090 or a 32 GB Mac.

Mistral Nemotron

Mistral Nemotron is a preview large language model, jointly developed by Mistral AI and NVIDIA, released on June 11, 2025. Optimized by NVIDIA for inference using TensorRT-LLM and vLLM, it supports a massive 128K-token context window and is built for agentic workflows—excelling in instruction-following, function calling, and code generation—while delivering state-of-the-art performance across reasoning, math, coding, and multilingual benchmarks.

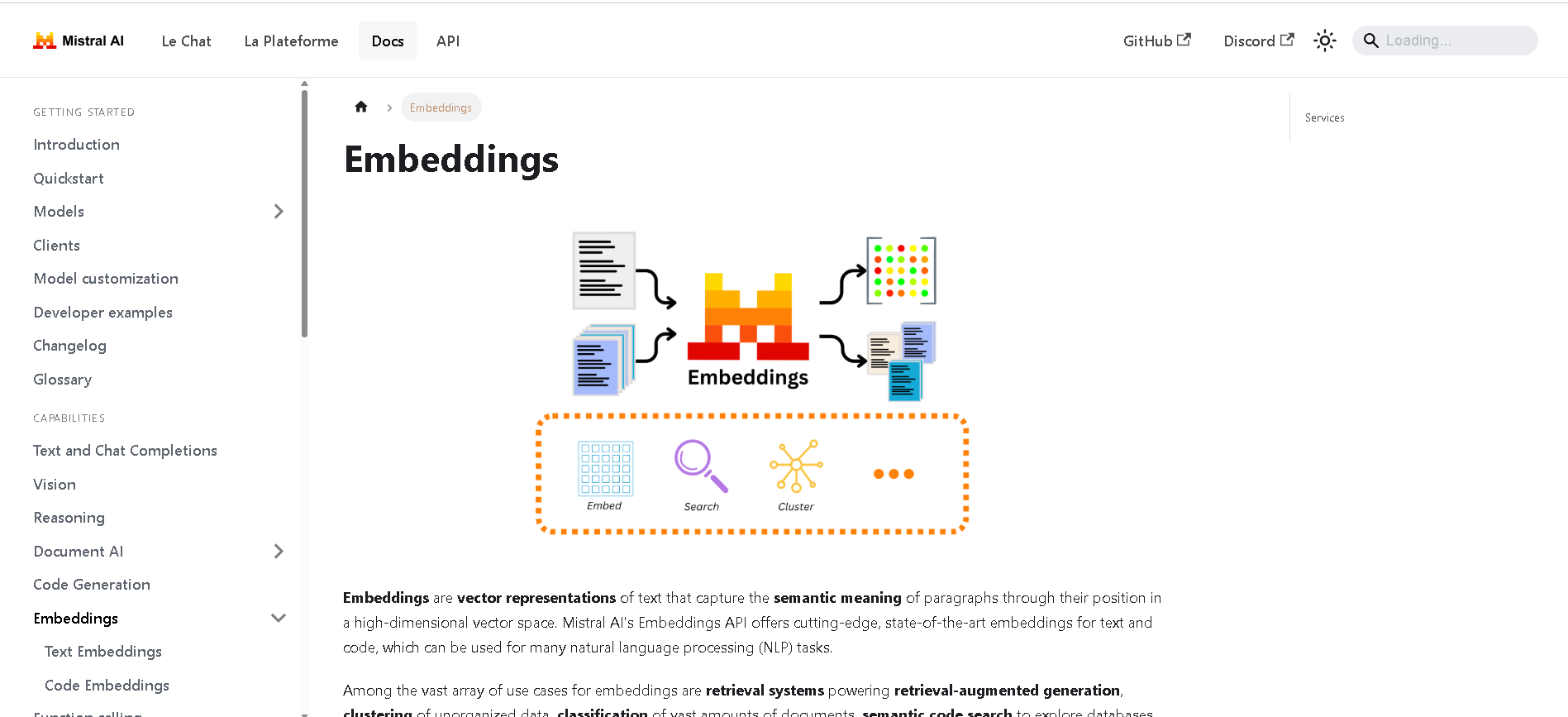

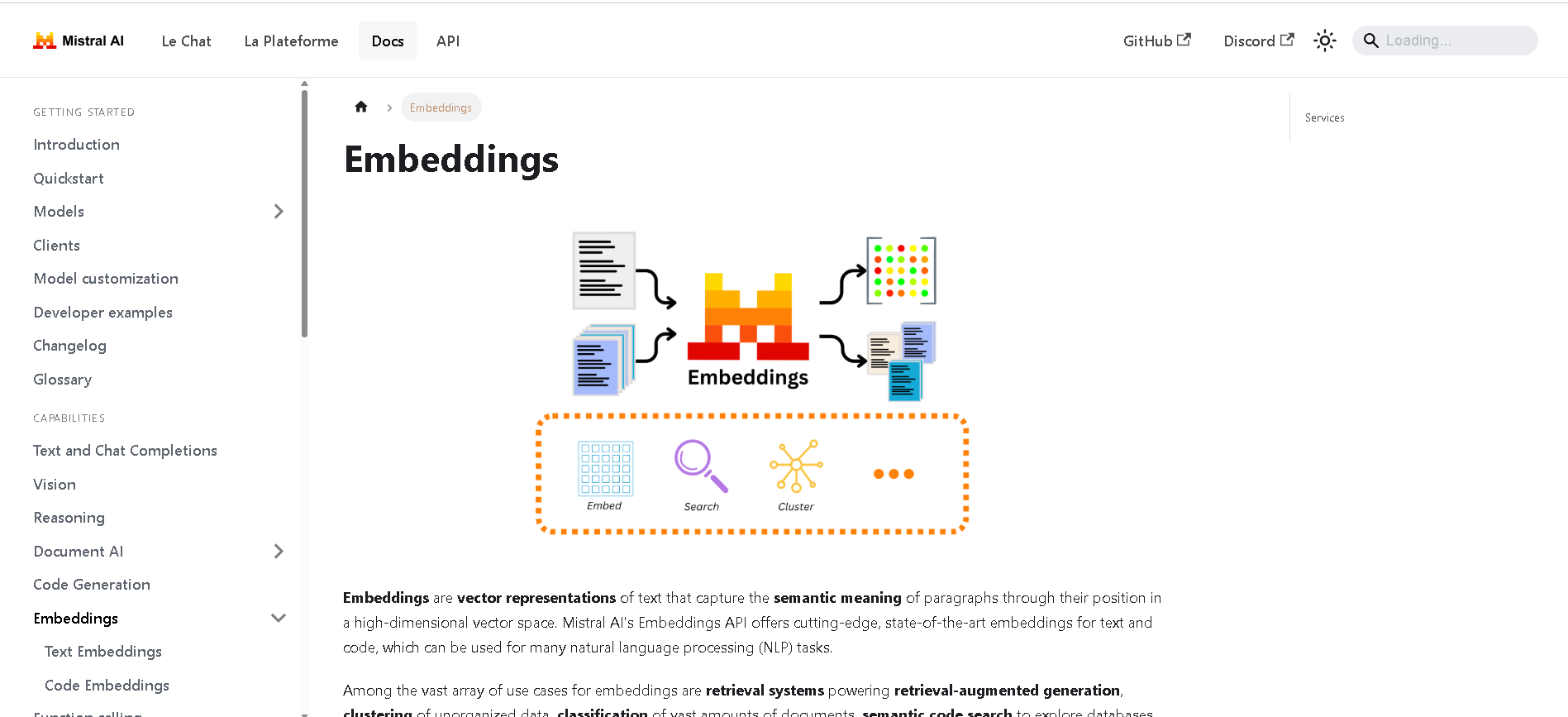

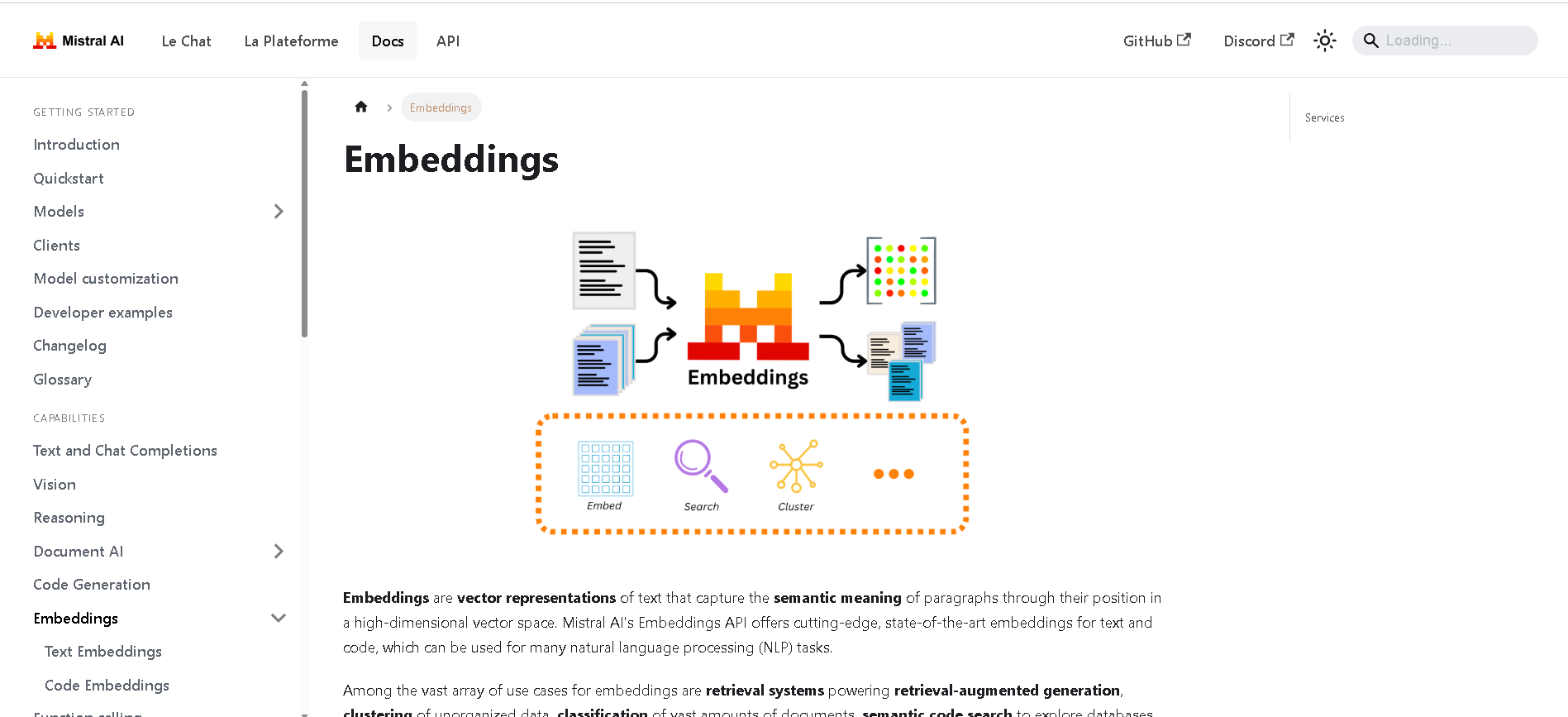

Mistral Embed

Mistral Embed is Mistral AI’s high-performance text embedding model designed for semantic retrieval, clustering, classification, and retrieval-augmented generation (RAG). With support for up to 8,192 tokens and producing 1,024-dimensional vectors, it delivers state-of-the-art semantic similarity and organization capabilities.

Mistral Pixtral Large

Pixtral Large is Mistral AI’s latest multimodal powerhouse, launched November 18, 2024. Built atop the 123B‑parameter Mistral Large 2, it features a 124B‑parameter multimodal decoder paired with a 1B‑parameter vision encoder, and supports a massive 128K‑token context window—enabling it to process up to 30 high-resolution images or ~300-page documents.

Mistral Moderation API

Mistral Moderation API is a content moderation service released in November 2024, powered by a fine-tuned version of Mistral’s Ministral 8B model. It classifies text across nine safety categories—sexual content, hate/discrimination, violence/threats, dangerous/criminal instructions, self‑harm, health, financial, legal, and personally identifiable information (PII). It offers two endpoints: one for raw text and one optimized for conversational content.

Blitzy

Blitzy is an AI-powered autonomous software development platform designed to accelerate enterprise-grade software creation. It automates over 80% of the development process, enabling teams to transform six-month projects into six-day turnarounds. Blitzy utilizes a multi-agent System 2 AI architecture to reason deeply across entire codebases, providing high-quality, production-ready code validated at both compile and runtime.

Dotlane

Dotlane is an all-in-one AI assistant platform that brings together multiple leading AI models under a single, user-friendly interface. Instead of subscribing to or switching between different providers, users can access models from OpenAI, Anthropic, Grok, Mistral, Deepseek, and others in one place. It offers a wide range of features including advanced chat, file understanding and summarization, real-time search, and image generation. Dotlane’s mission is to make powerful AI accessible, fair, and transparent for individuals and teams alike.

Unsloth AI

Unsloth.AI is an open-source platform designed to accelerate and simplify the fine-tuning of large language models (LLMs). By leveraging manual mathematical derivations, custom GPU kernels, and efficient optimization techniques, Unsloth achieves up to 30x faster training speeds compared to traditional methods, without compromising model accuracy. It supports a wide range of popular models, including Llama, Mistral, Gemma, and BERT, and works seamlessly on various GPUs, from consumer-grade Tesla T4 to high-end H100, as well as AMD and Intel GPUs. Unsloth empowers developers, researchers, and AI enthusiasts to fine-tune models efficiently, even with limited computational resources, democratizing access to advanced AI model customization. With a focus on performance, scalability, and flexibility, Unsloth.AI is suitable for both academic research and commercial applications, helping users deploy specialized AI solutions faster and more effectively.

Editorial Note

This page was researched and written by the ATB Editorial Team. Our team researches each AI tool by reviewing its official website, testing features, exploring real use cases, and considering user feedback. Every page is fact-checked and regularly updated to ensure the information stays accurate, neutral, and useful for our readers.

If you have any suggestions or questions, email us at hello@aitoolbook.ai