- Enterprises & Startups: Deploy virtual assistants, chatbots, and content generators tailored to Middle Eastern and South Asian markets.

- Developers & Engineers: Use the API or a local deployment to build low-latency, region-specific applications.

- Content Creators & Educators: Generate culturally relevant educational, marketing, or social content with high regional resonance.

- Healthcare & Finance Teams: Fine-tune the model for accurate, domain-specific output in Arabic and regional dialects.

- Privacy-Sensitive Organizations: Run entirely on-premises, keeping data within local jurisdictions.

How to Use Mistral Saba?

- Access via API or Locally: Available through Mistral API (`mistral-saba-2502`) or deployable behind secure infrastructure.

- Send Region-Specific Prompts: Craft prompts in Arabic, Tamil, Malayalam, Urdu, etc., for conversational or instructional outputs.

- Fine-Tune for Specializations: Adapt for sectors like finance, healthcare, or energy using additional proprietary datasets.

- Deploy on a Single GPU: Runs at 150+ tokens/second on one card, keeping latency and cost low.
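The API route described above can be sketched in Python as follows. This is a minimal sketch, assuming Mistral's chat completions endpoint at `https://api.mistral.ai/v1/chat/completions` and an API key stored in a `MISTRAL_API_KEY` environment variable. The helper names `build_request` and `ask` are hypothetical; only the model ID `mistral-saba-2502` comes from the listing.

```python
import json
import os
import urllib.request

# Assumed endpoint for Mistral's hosted chat completions API.
API_URL = "https://api.mistral.ai/v1/chat/completions"
MODEL = "mistral-saba-2502"  # model ID quoted in the listing


def build_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request for one user prompt."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        # ensure_ascii=False keeps Arabic, Tamil, etc. readable in the body.
        data=json.dumps(payload, ensure_ascii=False).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(prompt: str, api_key: str) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(build_request(prompt, api_key)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    key = os.environ.get("MISTRAL_API_KEY")
    if key:  # only call the API when a key is configured
        # Arabic prompt: "What is the capital of Jordan?"
        print(ask("ما هي عاصمة الأردن؟", key))
```

Building the request separately from sending it makes the payload easy to inspect or log before any tokens are billed.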

- Regional Fluency: Outperforms larger models such as Llama 3.1 (70B) and Jais (70B) on Arabic and other regional benchmarks (MMLU, TyDiQA, AlGhafa).

- Cultural Awareness: Trained on carefully curated Middle Eastern and South Asian data, capturing dialectal nuance.

- Compact & Efficient: The smaller model size allows single-GPU deployment at 150+ tokens/second, which keeps running costs down.

Pros

- Fluent in Arabic and South Asian languages with regional nuance

- High performance with cultural and domain accuracy

- Deployable on-prem or via API, respecting data sovereignty

- Fast token throughput on modest hardware

- Ideal base for fine-tuning and enterprise adaptation

Cons

- Model weights are not open-sourced, which has raised transparency concerns in the community

- Currently supports only select languages; expansion planned

- Benchmarks from early adopters still limited in variety

API

$0.2/$0.6 per 1M tokens

$0.2 per 1M input tokens

$0.6 per 1M output tokens
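To make the pricing concrete, here is a small cost estimator. It is only a sketch built on the two listed rates; the function name `estimate_cost` and the example token counts are hypothetical, and actual billing may differ.

```python
from decimal import Decimal

# Listed rates in USD per 1M tokens.
INPUT_PRICE_PER_M = Decimal("0.2")
OUTPUT_PRICE_PER_M = Decimal("0.6")
TOKENS_PER_M = Decimal(1_000_000)


def estimate_cost(input_tokens: int, output_tokens: int) -> Decimal:
    """Return the estimated USD cost for the given token counts."""
    total = (
        Decimal(input_tokens) * INPUT_PRICE_PER_M
        + Decimal(output_tokens) * OUTPUT_PRICE_PER_M
    )
    return total / TOKENS_PER_M


if __name__ == "__main__":
    # Hypothetical workload: 2M input tokens and 500K output tokens.
    print(f"Estimated cost: ${estimate_cost(2_000_000, 500_000):.2f}")
```

For that example workload, the input side costs $0.40 and the output side $0.30, for $0.70 in total. `Decimal` is used so the rates are not subject to binary floating-point rounding.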

Similar AI Tools

KIMI

Kimi.ai is an advanced AI chatbot developed by Beijing-based company Moonshot AI. Launched in October 2023, Kimi has rapidly gained popularity for its exceptional capacity to process extensive textual inputs and its multimodal capabilities. It is engineered to adapt to the specific needs of its users, whether in corporate environments or personal settings, facilitating smoother, more efficient operations.

Odia Gen AI

OdiaGenAI is a collaborative open-source initiative launched in 2023 to develop generative AI and LLM technologies tailored for Odia—a low-resource Indic language—and other regional languages. Led by Odia technologists and hosted under Odisha AI, it focuses on building pretrained, fine-tuned, and instruction-following models, datasets, and tools to empower areas like education, governance, agriculture, tourism, health, and industry.

Mistral Magistral

Magistral is Mistral AI’s first dedicated reasoning model, released on June 10, 2025, and available in two versions: the open-source 24B Magistral Small and the enterprise-grade Magistral Medium. It’s built to provide transparent, multilingual, domain-specific chain-of-thought reasoning, excelling in step-by-step logic tasks in areas like math, finance, law, and engineering.

Mistral Codestral ..

Codestral 25.01 is Mistral AI’s upgraded code-generation model, released January 13, 2025. Featuring a more efficient architecture and improved tokenizer, it delivers code completion and intelligence about 2× faster than its predecessor, with support for fill-in-the-middle (FIM), code correction, test generation, and proficiency in over 80 programming languages, all within a 256K-token context window.

Mistral Embed

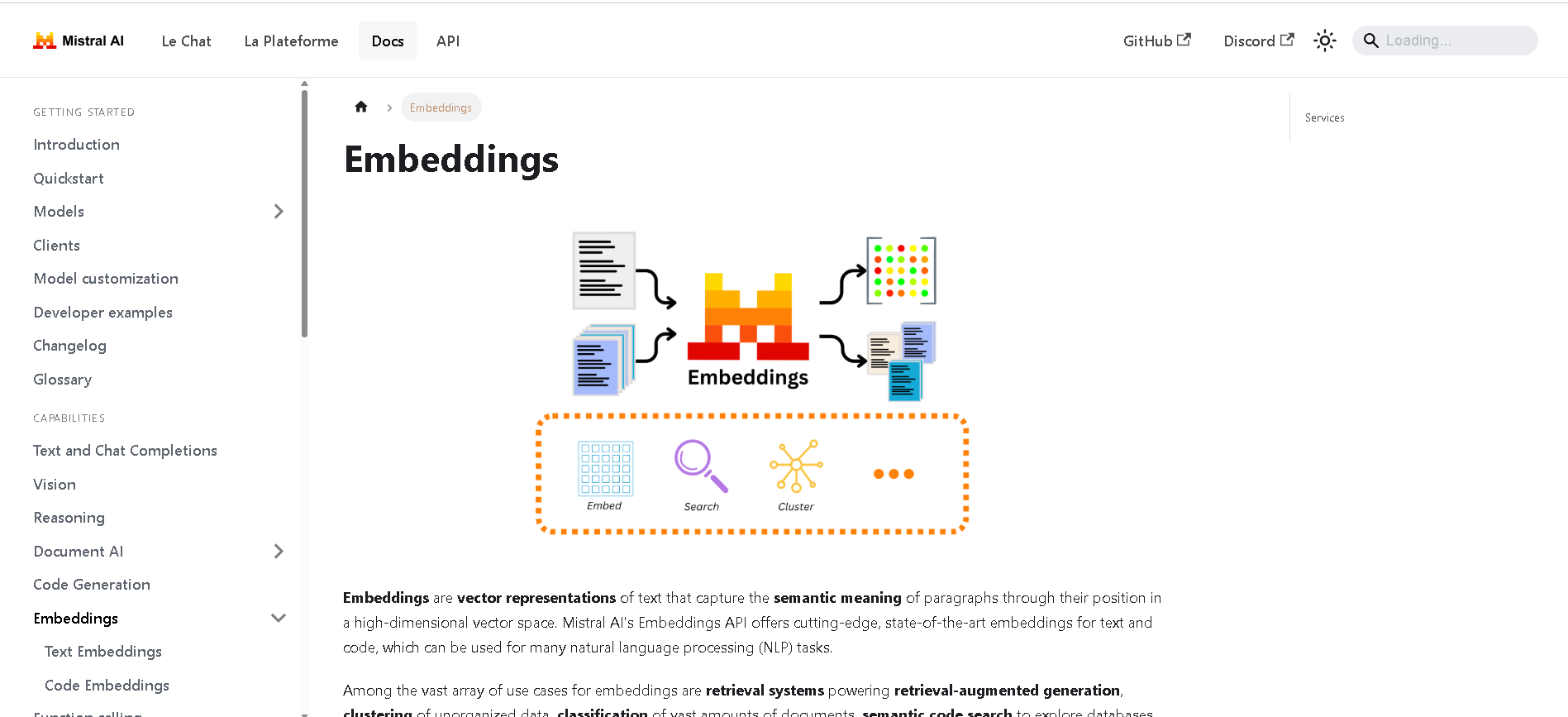

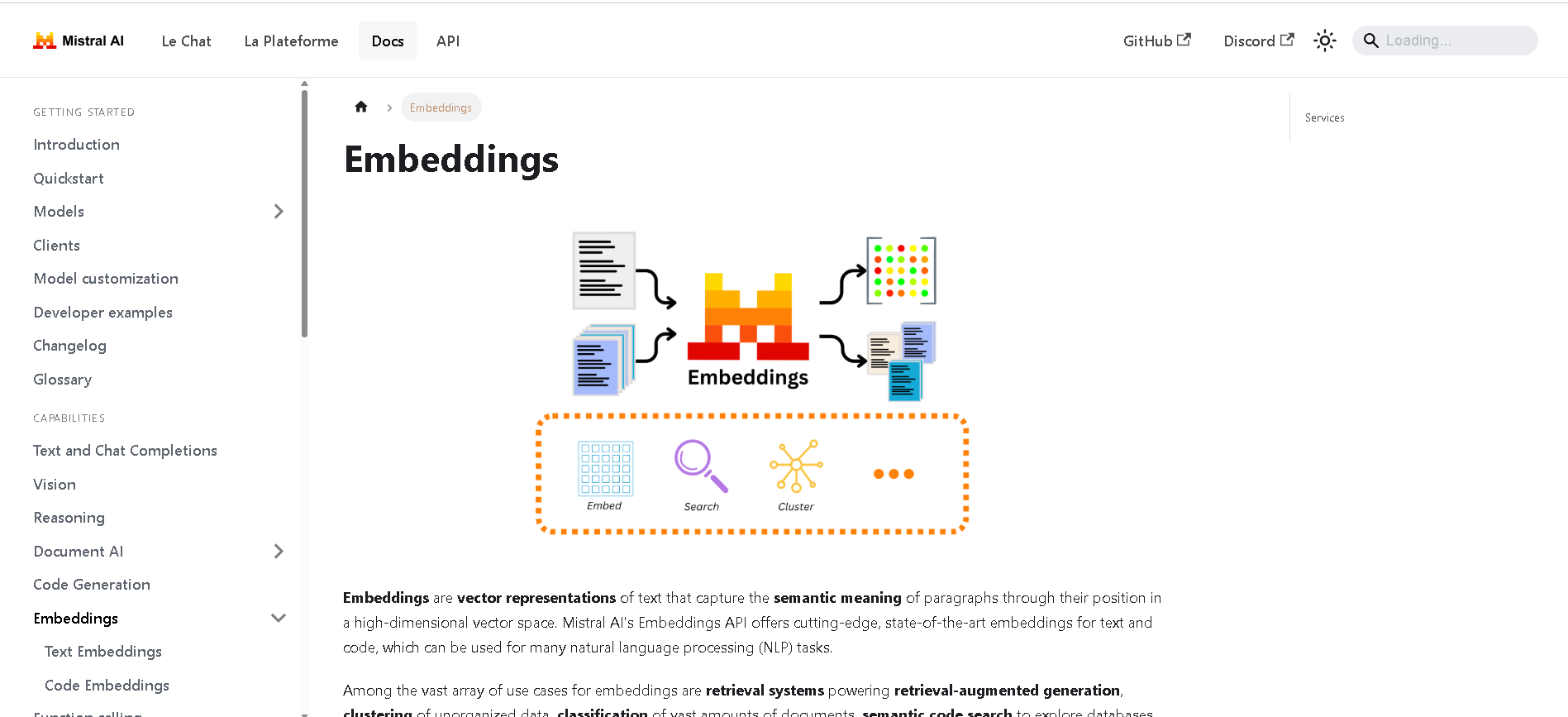

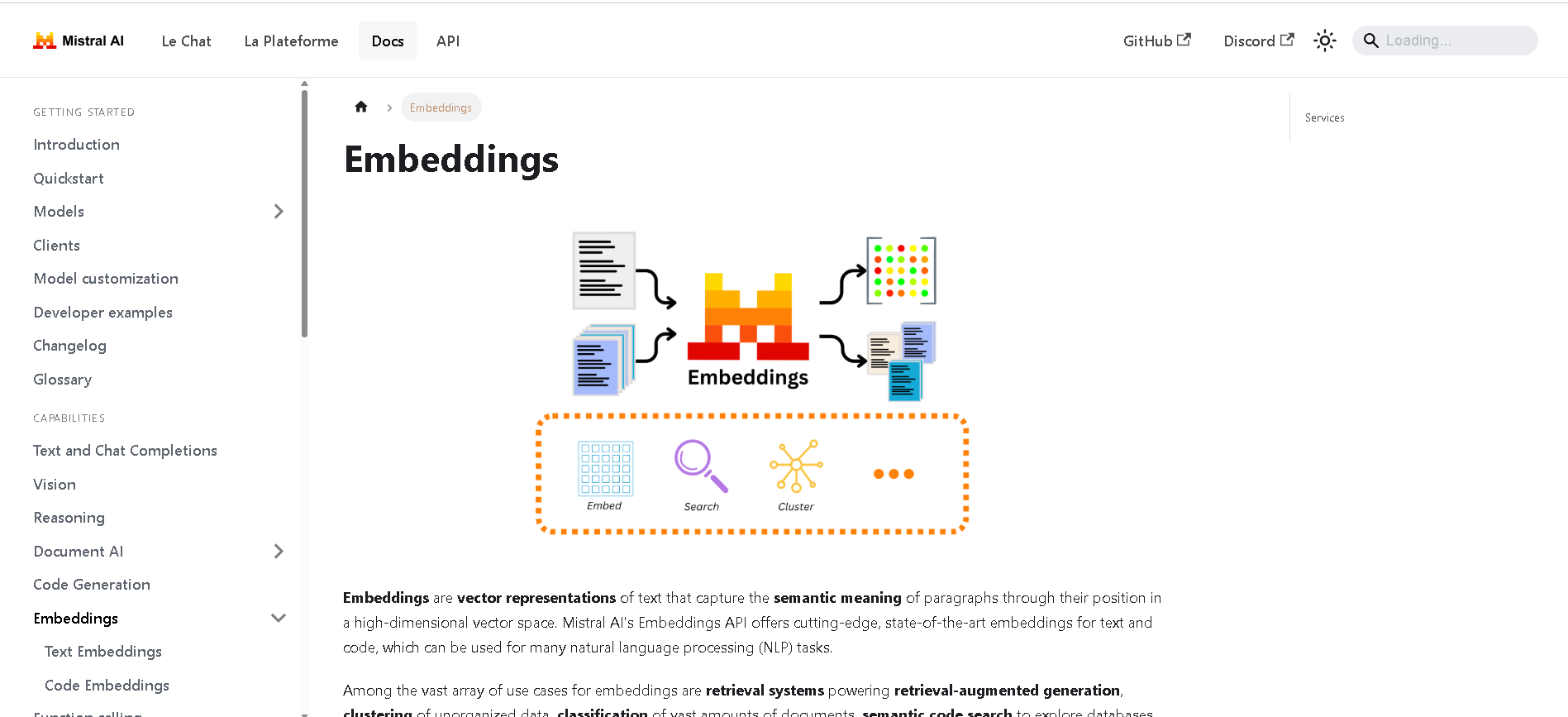

Mistral Embed is Mistral AI’s high-performance text embedding model designed for semantic retrieval, clustering, classification, and retrieval-augmented generation (RAG). With support for up to 8,192 tokens and producing 1,024-dimensional vectors, it delivers state-of-the-art semantic similarity and organization capabilities.

Mistral Pixtral La..

Pixtral Large is Mistral AI’s latest multimodal powerhouse, launched November 18, 2024. Built atop the 123B‑parameter Mistral Large 2, it features a 124B‑parameter multimodal decoder paired with a 1B‑parameter vision encoder, and supports a massive 128K‑token context window—enabling it to process up to 30 high-resolution images or ~300-page documents.

Boundary AI

BoundaryML.com introduces BAML, an expressive language specifically designed for structured text generation with Large Language Models (LLMs). Its primary purpose is to simplify and enhance the process of obtaining structured data (like JSON) from LLMs, moving beyond the challenges of traditional methods by providing robust parsing, error correction, and reliable function-calling capabilities.

LM Studio

LM Studio is a local AI toolkit that empowers users to discover, download, and run Large Language Models (LLMs) directly on their personal computers. It provides a user-friendly interface to chat with models, set up a local LLM server for applications, and ensures complete data privacy as all processes occur locally on your machine.

TavonnAI

TavonnAI is an open-source AI platform offering access to 30-plus large language models (LLMs) for chat, image generation, and creating animated GIFs or other visuals. For a fixed monthly subscription, users get “unlimited” usage, which means fewer restrictions, more freedom to experiment, and broad creative potential.

ChatBetter

ChatBetter is an AI platform designed to unify access to all major large language models (LLMs) within a single chat interface. Built for productivity and accuracy, ChatBetter leverages automatic model selection to route every query to the most capable AI—eliminating guesswork about which model to use. Users can directly compare responses from OpenAI, Anthropic, Google, Meta, DeepSeek, Perplexity, Mistral, xAI, and Cohere models side by side, or merge answers for comprehensive insights. The system is crafted for teams and individuals alike, enabling complex research, planning, and writing tasks to be accomplished efficiently in one place.

Ask Any Model

AskAnyModel is a unified AI interface that allows users to interact with multiple leading AI models — such as GPT, Claude, Gemini, and Mistral — from a single platform. It eliminates the need for multiple subscriptions and interfaces by bringing top AI models into one streamlined environment. Users can compare responses, analyze outputs, and select the best AI model for specific tasks like content creation, coding, data analysis, or research. AskAnyModel empowers individuals and teams to harness AI diversity efficiently, offering advanced tools for prompt testing, model benchmarking, and workflow integration.

LM Studio

LM Studio is a local large language model (LLM) platform that lets users download and run powerful AI language models such as Llama, MPT, and Gemma directly on their own computers. It supports Mac, Windows, and Linux, giving users flexibility across devices. LM Studio focuses on privacy and control: models run locally rather than on cloud-based services, so data stays on the user’s device. It offers an easy-to-install interface with step-by-step setup guidance, giving developers, researchers, and AI enthusiasts access to advanced AI capabilities without requiring an internet connection.

Editorial Note

This page was researched and written by the ATB Editorial Team. Our team researches each AI tool by reviewing its official website, testing features, exploring real use cases, and considering user feedback. Every page is fact-checked and regularly updated to ensure the information stays accurate, neutral, and useful for our readers.

If you have any suggestions or questions, email us at hello@aitoolbook.ai