- Enterprise Developers & API Users: Ideal for mission-critical workflows that require speed and scalability.

- Data & Compliance Teams: Use it for rapid multimodal document analysis and routing at scale.

- AI & Agent Builders: Invoke tools like search or code execution with controlled reasoning.

- Localization Teams: Translate text, images, audio, or video content quickly.

- SaaS & High-Volume Clients: Leverage the preview for cost-optimized, real-time classification and summarization pipelines.

How to Use Gemini 2.5 Flash-Lite?

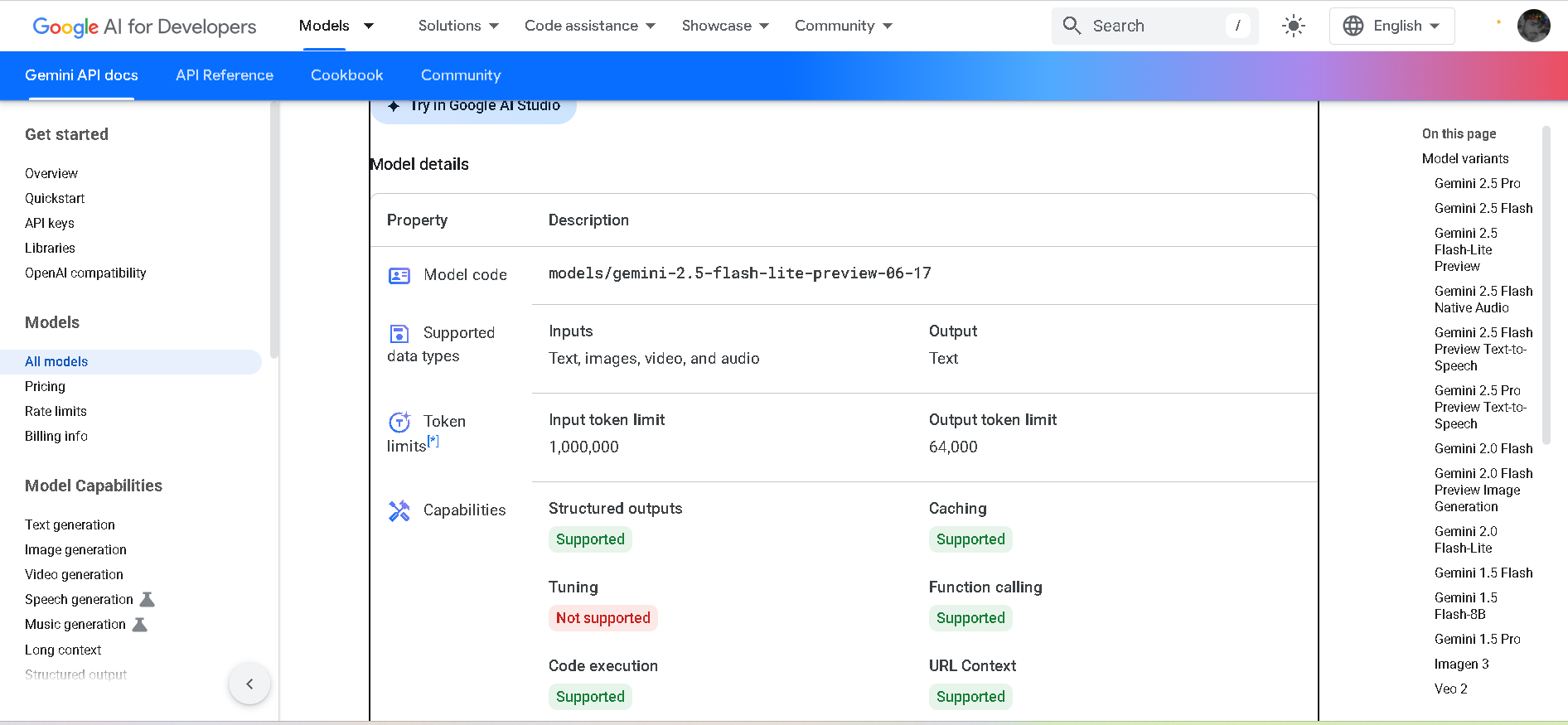

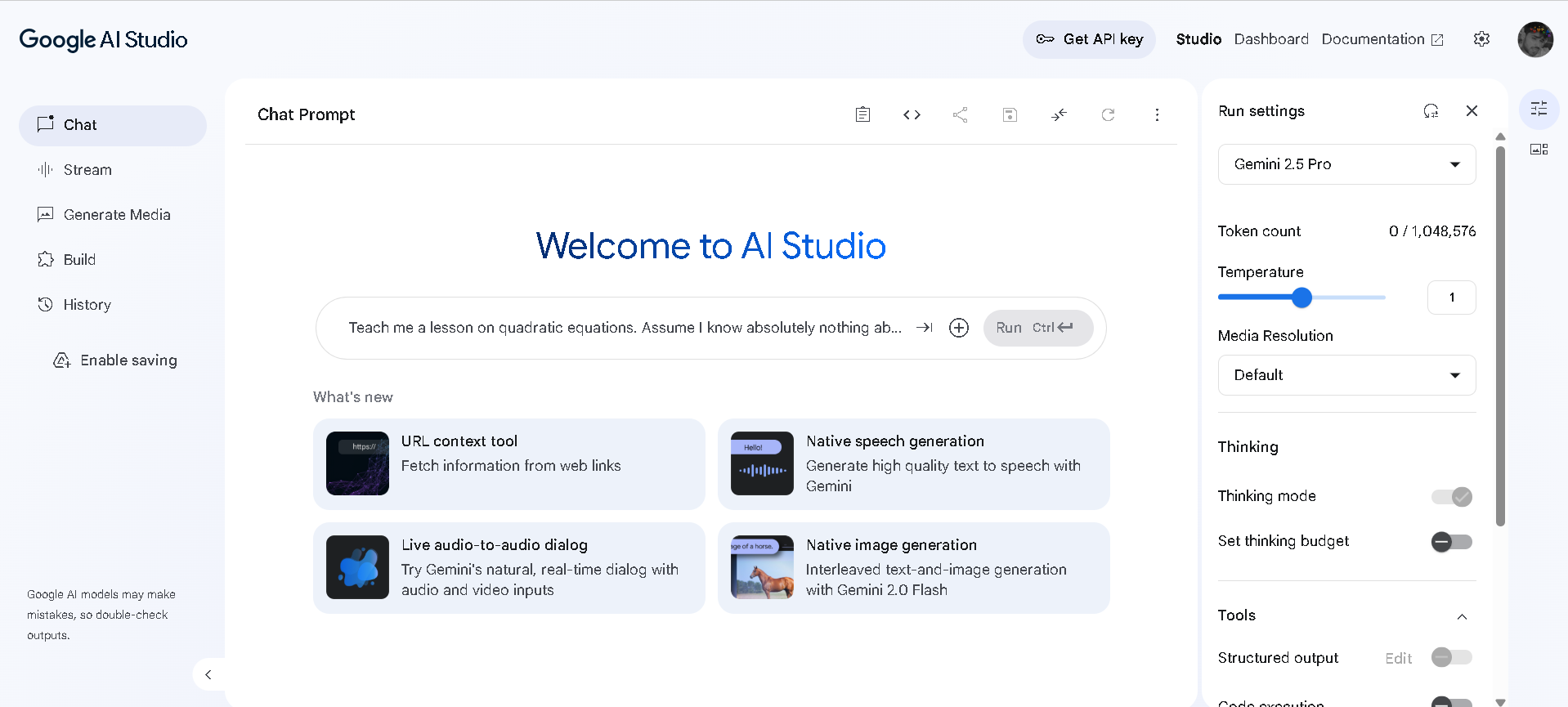

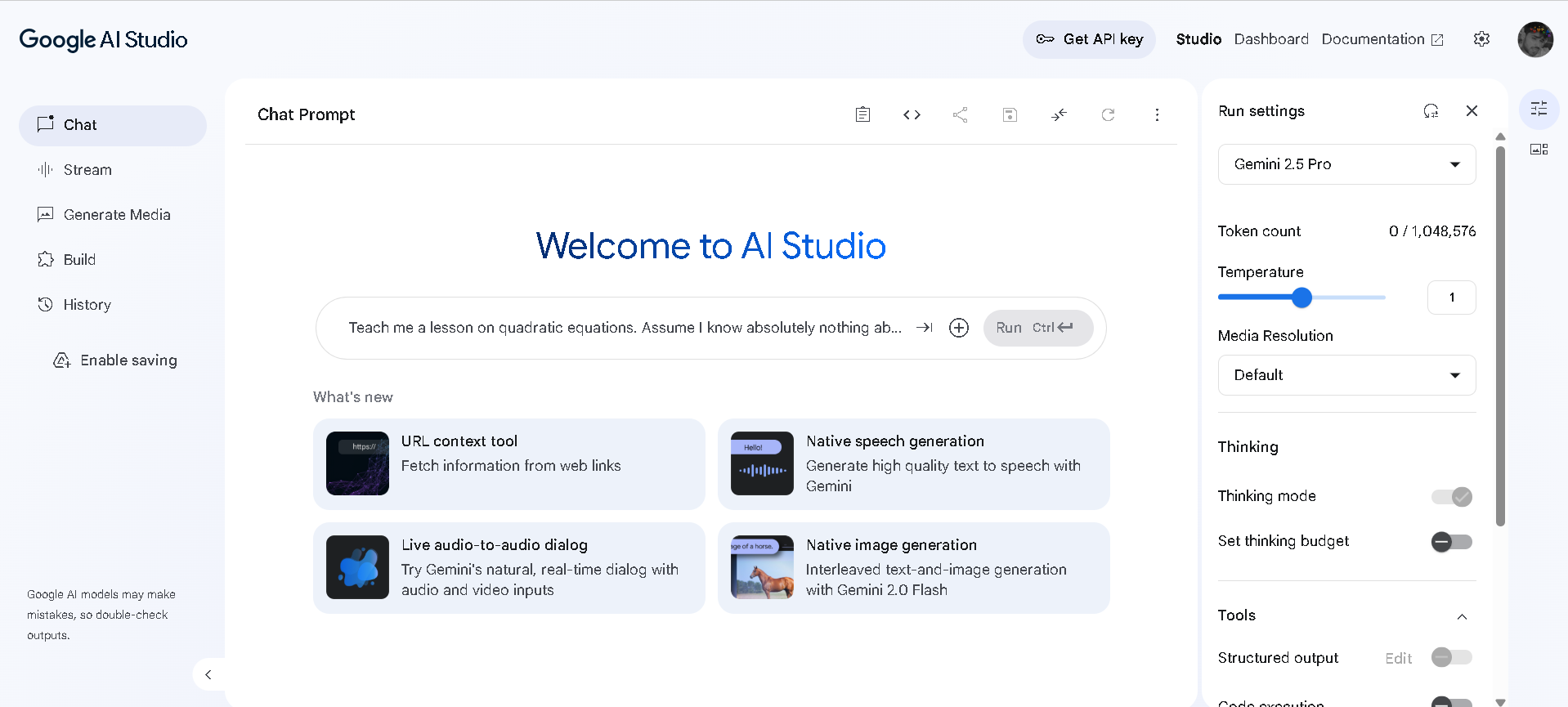

- Access via Google AI Studio or Vertex AI: The preview model `gemini-2.5-flash-lite-preview-06-17` is available now.

- Send Text, Image, Audio, or Video Input: Get rapid text responses optimized for throughput.

- Adjust Thinking Budget: Toggle reasoning on or off to balance cost, latency, and output quality.

- Run Large-Scale Tasks: Use it for classification, summarization, routing, and translation, where speed and volume matter most.

- Monitor & Tune Usage: Track latency, throughput, and token cost; consider caching or batching to optimize performance.
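The steps above can be sketched as a request builder. This is a minimal sketch, not an official client: `build_request` is a hypothetical helper, and the endpoint pattern and the `thinkingConfig.thinkingBudget` field follow the Gemini REST API as commonly documented, so check them against the current API reference before use. It only builds the URL and JSON body and sends nothing over the network.

```python
import json

MODEL = "gemini-2.5-flash-lite-preview-06-17"  # preview model ID named above
# Assumed REST endpoint pattern for the Gemini API; verify against current docs.
BASE_URL = "https://generativelanguage.googleapis.com/v1beta/models"


def build_request(prompt: str, thinking_budget: int = 0) -> tuple[str, dict]:
    """Return (url, json_body) for a generateContent call.

    Assumption: thinking_budget=0 turns reasoning off, and a positive value
    caps the tokens the model may spend thinking.
    """
    if thinking_budget < 0:
        raise ValueError("thinking_budget must be >= 0 in this sketch")
    url = f"{BASE_URL}/{MODEL}:generateContent"
    body = {
        "contents": [{"parts": [{"text": prompt}]}],
        "generationConfig": {
            "thinkingConfig": {"thinkingBudget": thinking_budget}
        },
    }
    return url, body


if __name__ == "__main__":
    url, body = build_request("Classify this ticket: 'Refund not received'")
    print(url)
    print(json.dumps(body, indent=2))
```

To send the request, POST the body to the URL with an API key from Google AI Studio. Starting with the budget at 0 and raising it only for tasks that need reasoning keeps cost and latency predictable.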

Key Features

- Fastest & Cheapest Gemini 2.5 Model: Optimized for high throughput and low latency; currently in preview.

- Thinking Budget Control: Developers choose when the model “thinks,” toggling reasoning for efficiency.

- Massive 1M-Token Context: Handles extensive inputs across text, code, visuals, audio, and video.

- Multimodal Input Support: Accepts diverse formats in a single call—text, images, audio, video.

- Better Than Previous Lite Models: Offers stronger performance across coding, math, reasoning, and vision than older Flash versions.
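To illustrate mixed formats in a single call, here is a minimal sketch of how text and media could be combined into one `contents` payload. The helper names (`text_part`, `inline_part`, `build_contents`) are hypothetical, and the `inline_data` / `mime_type` field names are assumed from the Gemini REST API's inline media format, so confirm them in the current documentation.

```python
import base64


def text_part(text: str) -> dict:
    """A plain text part."""
    return {"text": text}


def inline_part(data: bytes, mime_type: str) -> dict:
    """Wrap raw media bytes as a base64-encoded inline part."""
    return {
        "inline_data": {
            "mime_type": mime_type,
            "data": base64.b64encode(data).decode("ascii"),
        }
    }


def build_contents(prompt: str, media: list[tuple[bytes, str]]) -> list[dict]:
    """Combine media blobs and a text prompt into one request's contents."""
    parts = [inline_part(blob, mime) for blob, mime in media]
    parts.append(text_part(prompt))  # prompt goes after the media it refers to
    return [{"parts": parts}]


if __name__ == "__main__":
    # Placeholder bytes stand in for real image and audio files.
    contents = build_contents(
        "Summarize what the image and the audio clip have in common.",
        [(b"<png bytes>", "image/png"), (b"<mp3 bytes>", "audio/mpeg")],
    )
    print(len(contents[0]["parts"]))
```

Inline base64 suits small files; large audio or video is usually better uploaded separately and referenced, which this sketch does not cover.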

Pros

- High speed and throughput, well suited to enterprise pipelines

- Cost-effective with configurable reasoning

- Huge context window supports long inputs

- Runs multimodal workloads in one model

- Developer-friendly access via API and SDK

Cons

- Still in preview, so behavior and pricing may change before the stable release

- Requires developer integration; no UI yet

- Deep thinking may increase cost and latency if not tuned

Free: $0.00

Paid: Custom

- Input price (per 1M tokens): $0.10 (text / image / video); $0.50 (audio)

- Context caching (per 1M tokens): $0.075 (text / image / video); $0.25 (audio)

- Grounding with Google Search: 1,500 requests per day (RPD) free (limit shared with Flash-Lite RPD), then $35 per 1,000 requests
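As a rough worked example of the figures above, the sketch below estimates daily input and grounding costs. It assumes the listed token prices are per 1 million tokens and that the grounding free tier resets daily. Output-token pricing is not listed here, so it is left out. The function names are illustrative only.

```python
PER_MILLION = 1_000_000

# Input prices from the list above, assumed to be USD per 1M tokens.
INPUT_PRICE = {"text_image_video": 0.10, "audio": 0.50}

# Grounding with Google Search: first 1,500 requests per day are free,
# then $35 per 1,000 requests.
GROUNDING_FREE_PER_DAY = 1_500
GROUNDING_PRICE_PER_1K = 35.00


def input_cost(tokens: int, kind: str = "text_image_video") -> float:
    """Estimated USD cost of `tokens` input tokens of the given kind."""
    return tokens / PER_MILLION * INPUT_PRICE[kind]


def grounding_cost(requests_today: int) -> float:
    """Estimated USD cost of grounded requests made in one day."""
    billable = max(0, requests_today - GROUNDING_FREE_PER_DAY)
    return billable / 1_000 * GROUNDING_PRICE_PER_1K


if __name__ == "__main__":
    # Example day: 50M text tokens, 2M audio tokens, 2,500 grounded requests.
    total = (
        input_cost(50_000_000)
        + input_cost(2_000_000, "audio")
        + grounding_cost(2_500)
    )
    print(f"Estimated daily cost (inputs + grounding): ${total:.2f}")
```

In this example, grounding ($35.00) costs far more than the token input ($6.00), so for search-heavy workloads the request count matters more than token volume.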

Similar AI Tools

OpenAI GPT 4o mini..

GPT-4o Mini Realtime Preview is a lightweight, high-speed variant of OpenAI’s flagship multimodal model, GPT-4o. Built for blazing-fast, cost-efficient inference across text, vision, and voice inputs, this preview version is optimized for real-time responsiveness—without compromising on core intelligence. Whether you’re building chatbots, interactive voice tools, or lightweight apps, GPT-4o Mini delivers smart performance with minimal latency and compute load. It’s the perfect choice when you need responsiveness, affordability, and multimodal capabilities all in one efficient package.

Gemini 2.5 Pro Pre..

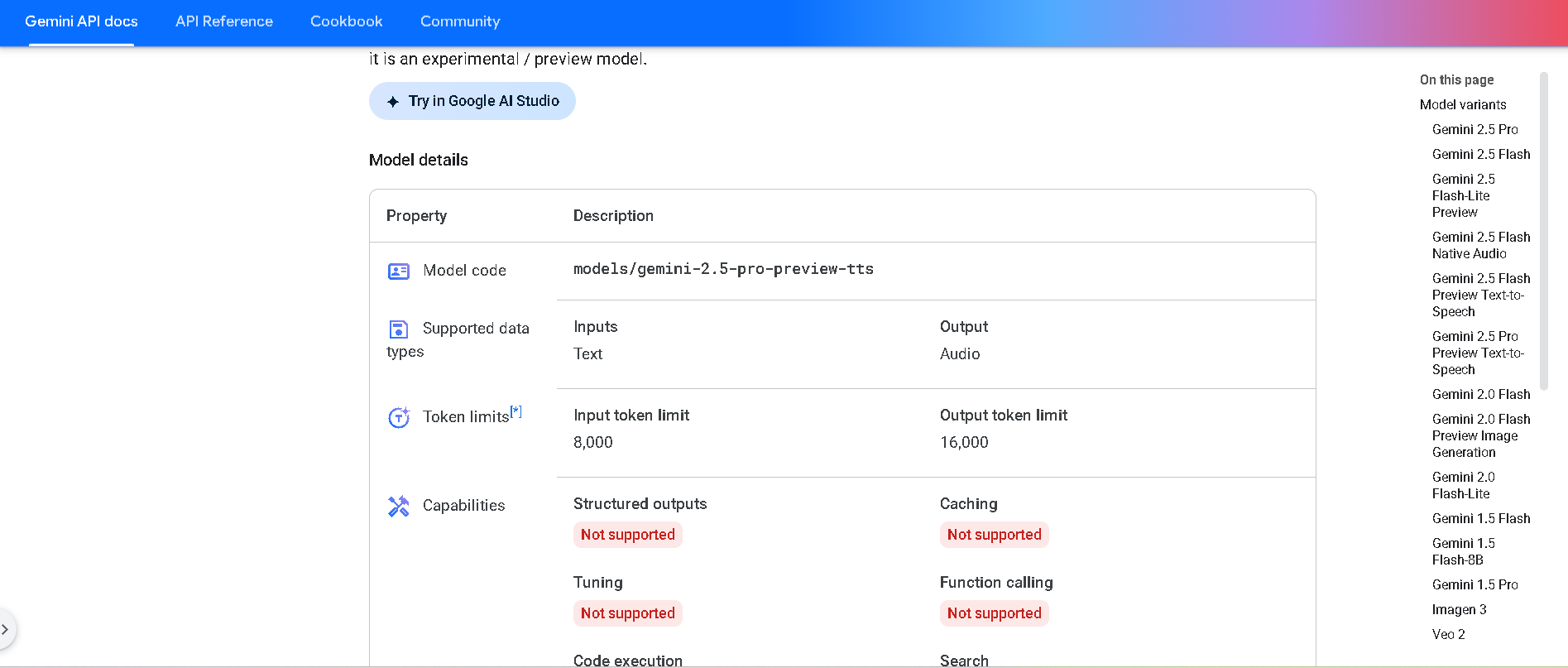

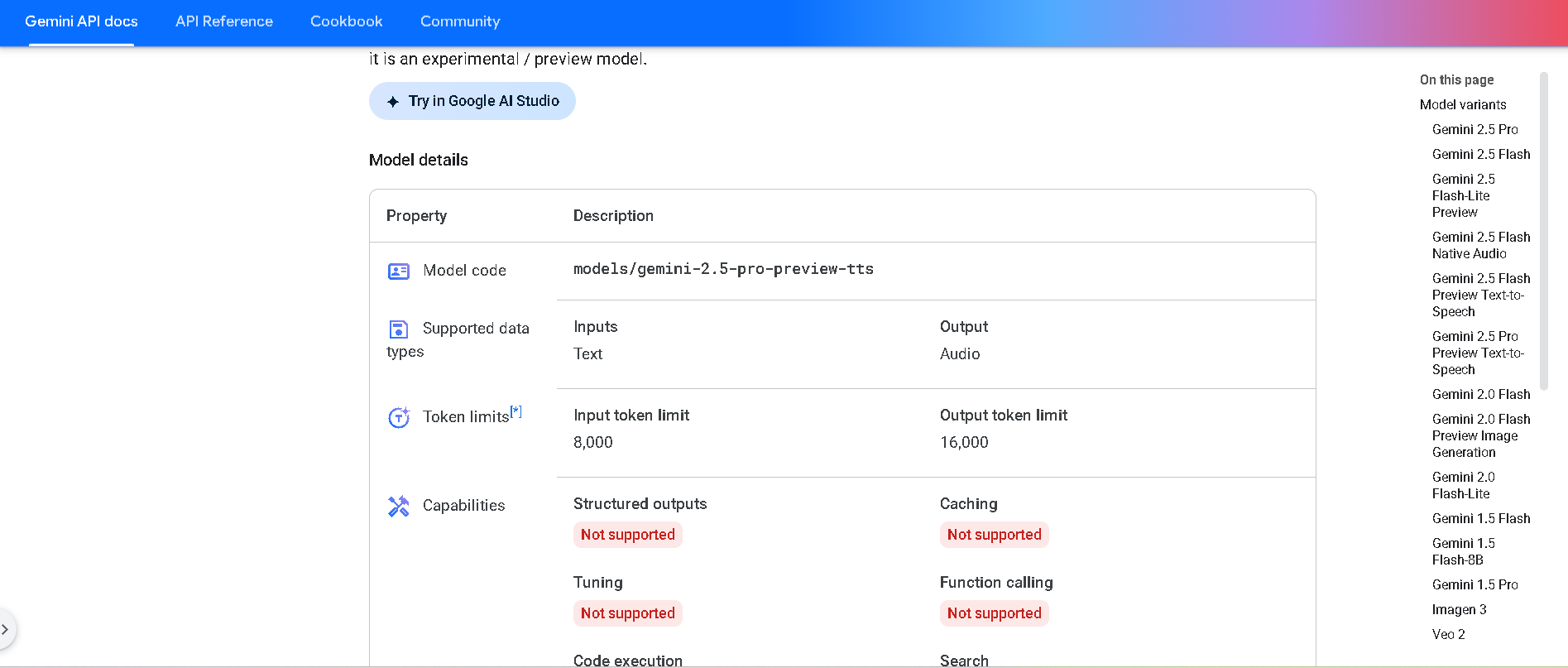

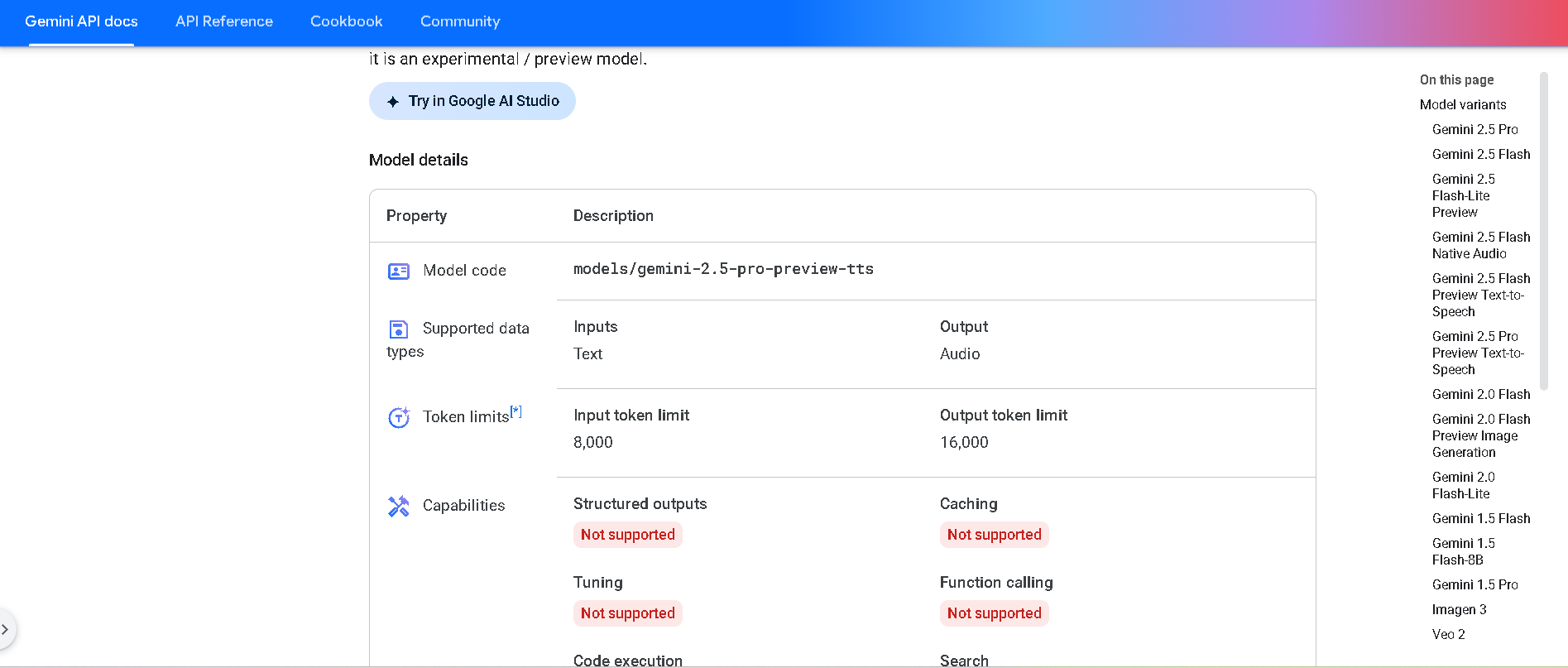

Gemini 2.5 Pro Preview TTS is Google DeepMind’s most powerful text-to-speech model in the Gemini 2.5 series, available in preview. It generates natural-sounding audio—from single-speaker readings to multi-speaker dialogue—while offering fine-grained control over voice style, emotion, pacing, and cadence. Designed for high-fidelity podcasts, audiobooks, and professional voice workflows.

Gemini 2.5 Pro Pre..

Gemini 2.5 Pro Preview TTS is Google DeepMind’s most powerful text-to-speech model in the Gemini 2.5 series, available in preview. It generates natural-sounding audio—from single-speaker readings to multi-speaker dialogue—while offering fine-grained control over voice style, emotion, pacing, and cadence. Designed for high-fidelity podcasts, audiobooks, and professional voice workflows.

Gemini 2.5 Pro Pre..

Gemini 2.5 Pro Preview TTS is Google DeepMind’s most powerful text-to-speech model in the Gemini 2.5 series, available in preview. It generates natural-sounding audio—from single-speaker readings to multi-speaker dialogue—while offering fine-grained control over voice style, emotion, pacing, and cadence. Designed for high-fidelity podcasts, audiobooks, and professional voice workflows.

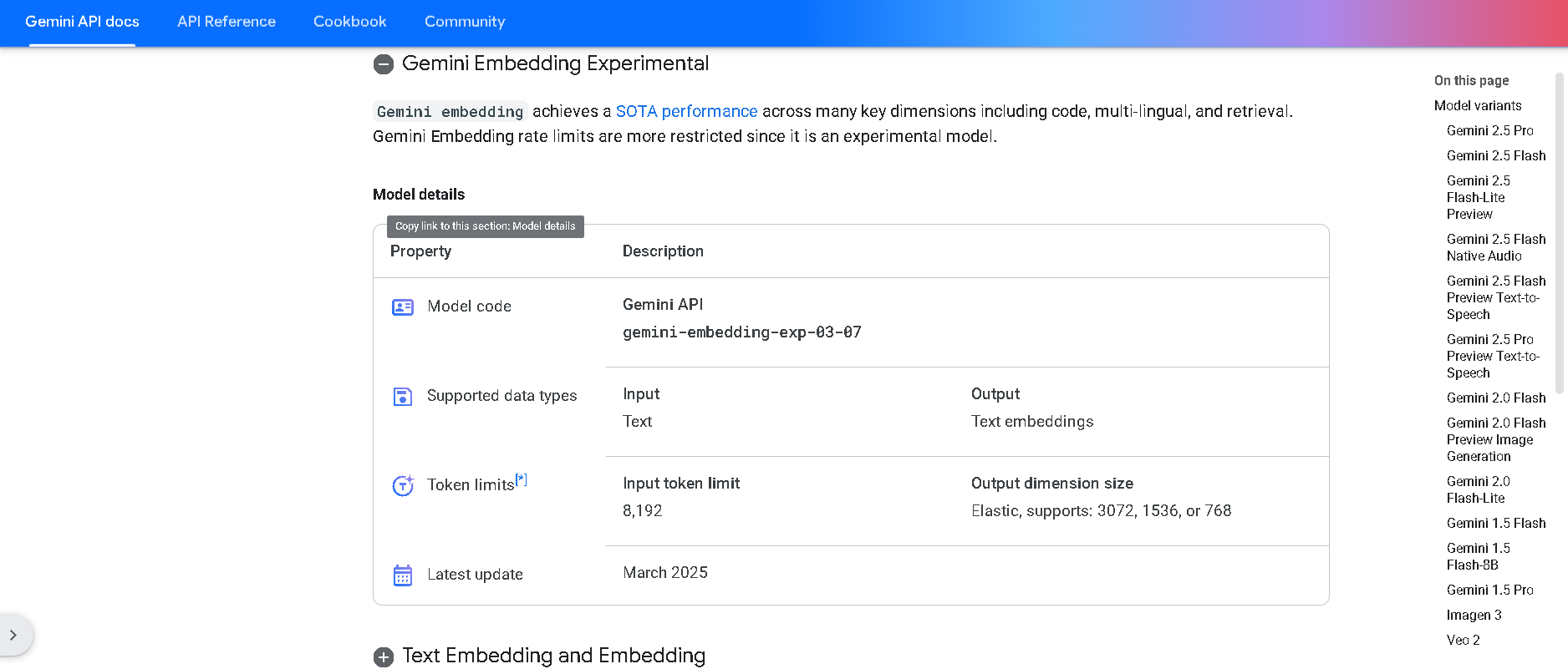

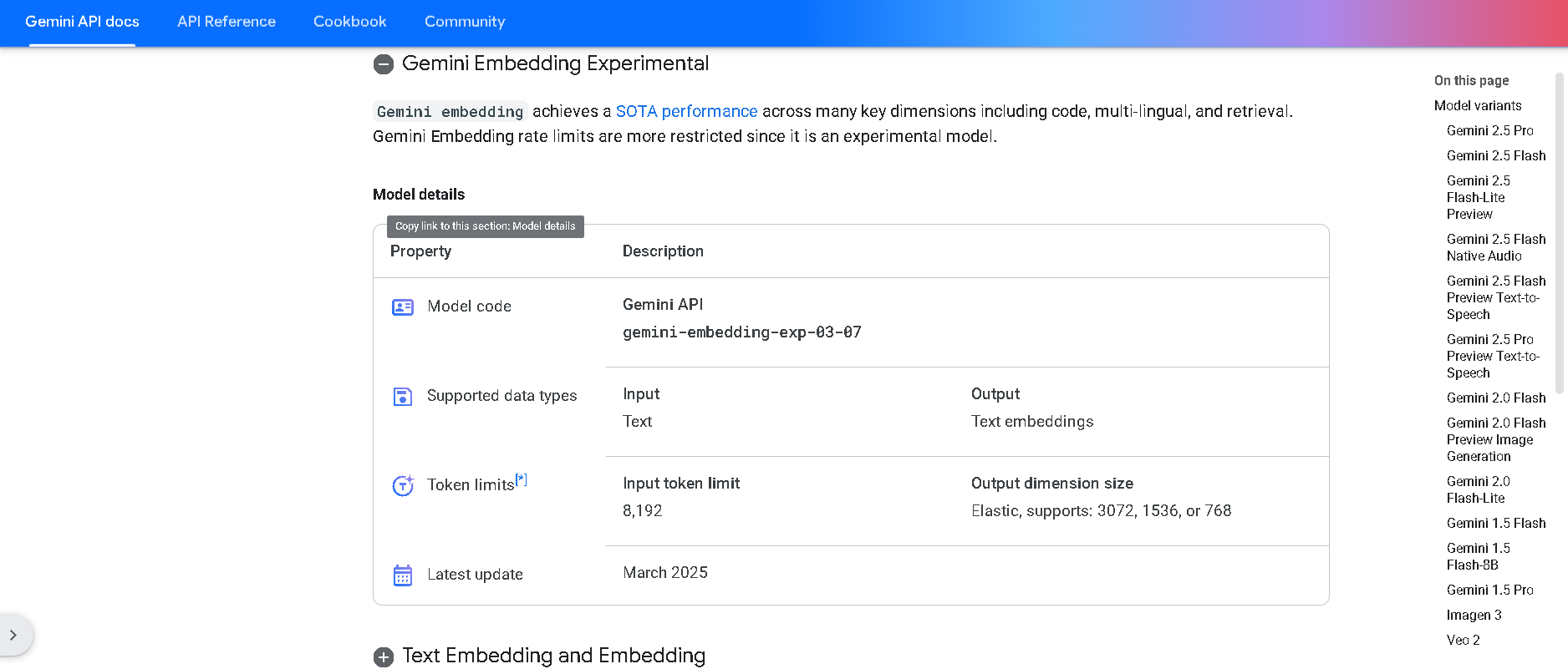

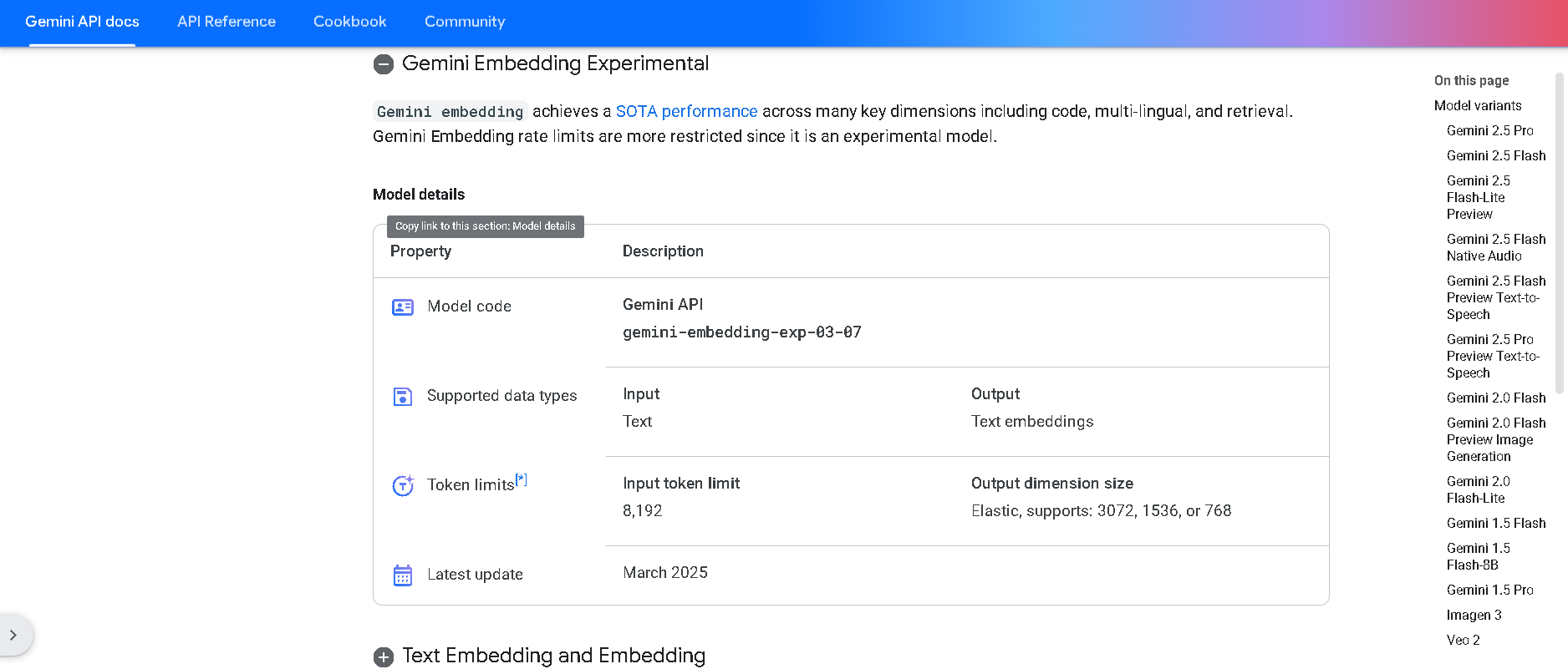

Gemini Embedding

Gemini Embedding is Google DeepMind’s state-of-the-art text embedding model, built on the Gemini family. It transforms text into high-dimensional numerical vectors (up to 3,072 dimensions) and generalizes across more than 100 languages and multiple modalities, including code. It achieves state-of-the-art results on the Massive Multilingual Text Embedding Benchmark (MMTEB), outperforming prior models across multilingual, English, and code-based tasks.

Grok 3 Latest

Grok 3 is xAI’s newest flagship AI chatbot, released on February 17, 2025, running on the massive Colossus supercluster (~200,000 GPUs). It offers elite-level reasoning, chain-of-thought transparency (“Think” mode), advanced “Big Brain” deeper reasoning, multimodal support (text, images), and integrated real-time DeepSearch—positioning it as a top-tier competitor to GPT‑4o, Gemini, Claude, and DeepSeek V3 on benchmarks.

grok-3-fast-latest

Grok 3 Fast is xAI’s speed-optimized variant of their flagship Grok 3 model, offering identical output quality with lower latency. It leverages the same underlying architecture—including multimodal input, chain-of-thought reasoning, and large context—but serves through optimized infrastructure for real-time responsiveness. It supports up to 131,072 tokens of context.

Google Workspace A..

Google Workspace AI (also known as "Gemini in Workspace") is Google’s integrated suite of AI-powered features embedded throughout Gmail, Docs, Sheets, Slides, Meet, Chat, Drive, Vids, Forms, and more. Introduced fully in early 2025, it brings Gemini 2.5 Pro, NotebookLM Plus, agentic workflows via Workspace Flows, and domain-specific video editing to help businesses and educators work smarter and faster.

Google Workspace A..

Google Workspace AI (also known as "Gemini in Workspace") is Google’s integrated suite of AI-powered features embedded throughout Gmail, Docs, Sheets, Slides, Meet, Chat, Drive, Vids, Forms, and more. Introduced fully in early 2025, it brings Gemini 2.5 Pro, NotebookLM Plus, agentic workflows via Workspace Flows, and domain-specific video editing to help businesses and educators work smarter and faster.

Google Workspace A..

Google Workspace AI (also known as "Gemini in Workspace") is Google’s integrated suite of AI-powered features embedded throughout Gmail, Docs, Sheets, Slides, Meet, Chat, Drive, Vids, Forms, and more. Introduced fully in early 2025, it brings Gemini 2.5 Pro, NotebookLM Plus, agentic workflows via Workspace Flows, and domain-specific video editing to help businesses and educators work smarter and faster.

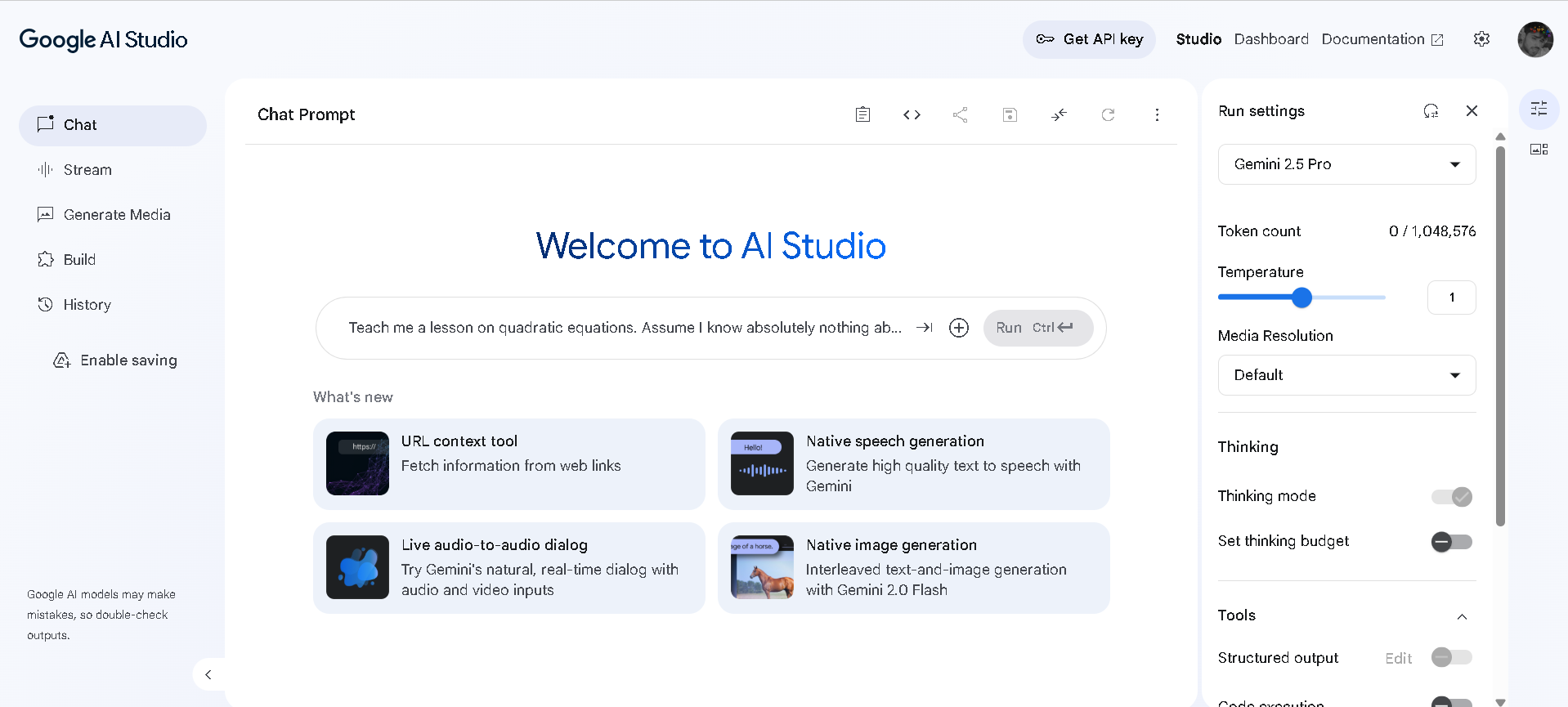

Google AI Studio

Google AI Studio is a web-based development environment that allows users to explore, prototype, and build applications using Google's cutting-edge generative AI models, such as Gemini. It provides a comprehensive set of tools for interacting with AI through chat prompts, generating various media types, and fine-tuning model behaviors for specific use cases.

ChatBetter

ChatBetter is an AI platform designed to unify access to all major large language models (LLMs) within a single chat interface. Built for productivity and accuracy, ChatBetter leverages automatic model selection to route every query to the most capable AI—eliminating guesswork about which model to use. Users can directly compare responses from OpenAI, Anthropic, Google, Meta, DeepSeek, Perplexity, Mistral, xAI, and Cohere models side by side, or merge answers for comprehensive insights. The system is crafted for teams and individuals alike, enabling complex research, planning, and writing tasks to be accomplished efficiently in one place.

ChatBetter

ChatBetter is an AI platform designed to unify access to all major large language models (LLMs) within a single chat interface. Built for productivity and accuracy, ChatBetter leverages automatic model selection to route every query to the most capable AI—eliminating guesswork about which model to use. Users can directly compare responses from OpenAI, Anthropic, Google, Meta, DeepSeek, Perplexity, Mistral, xAI, and Cohere models side by side, or merge answers for comprehensive insights. The system is crafted for teams and individuals alike, enabling complex research, planning, and writing tasks to be accomplished efficiently in one place.

ChatBetter

ChatBetter is an AI platform designed to unify access to all major large language models (LLMs) within a single chat interface. Built for productivity and accuracy, ChatBetter leverages automatic model selection to route every query to the most capable AI—eliminating guesswork about which model to use. Users can directly compare responses from OpenAI, Anthropic, Google, Meta, DeepSeek, Perplexity, Mistral, xAI, and Cohere models side by side, or merge answers for comprehensive insights. The system is crafted for teams and individuals alike, enabling complex research, planning, and writing tasks to be accomplished efficiently in one place.

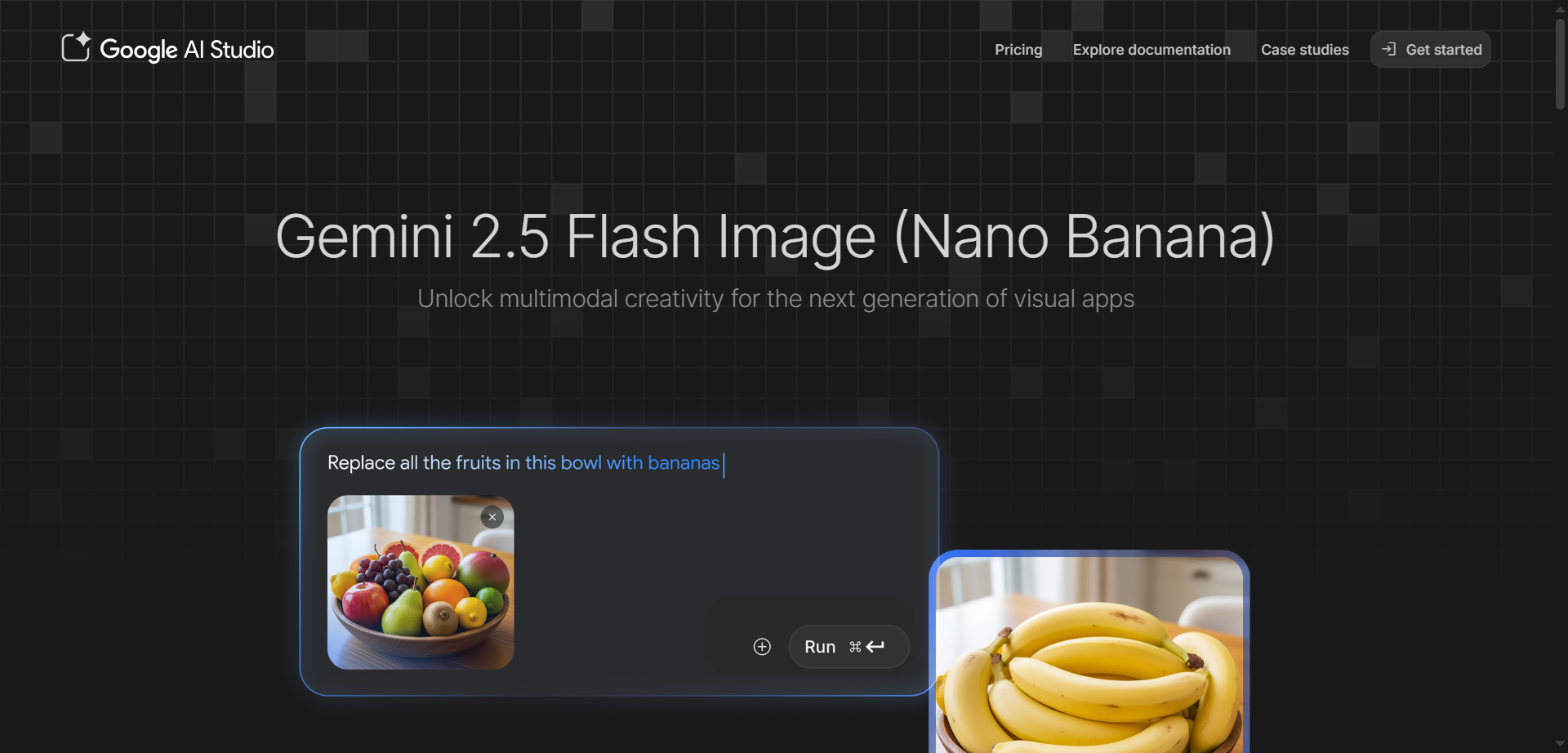

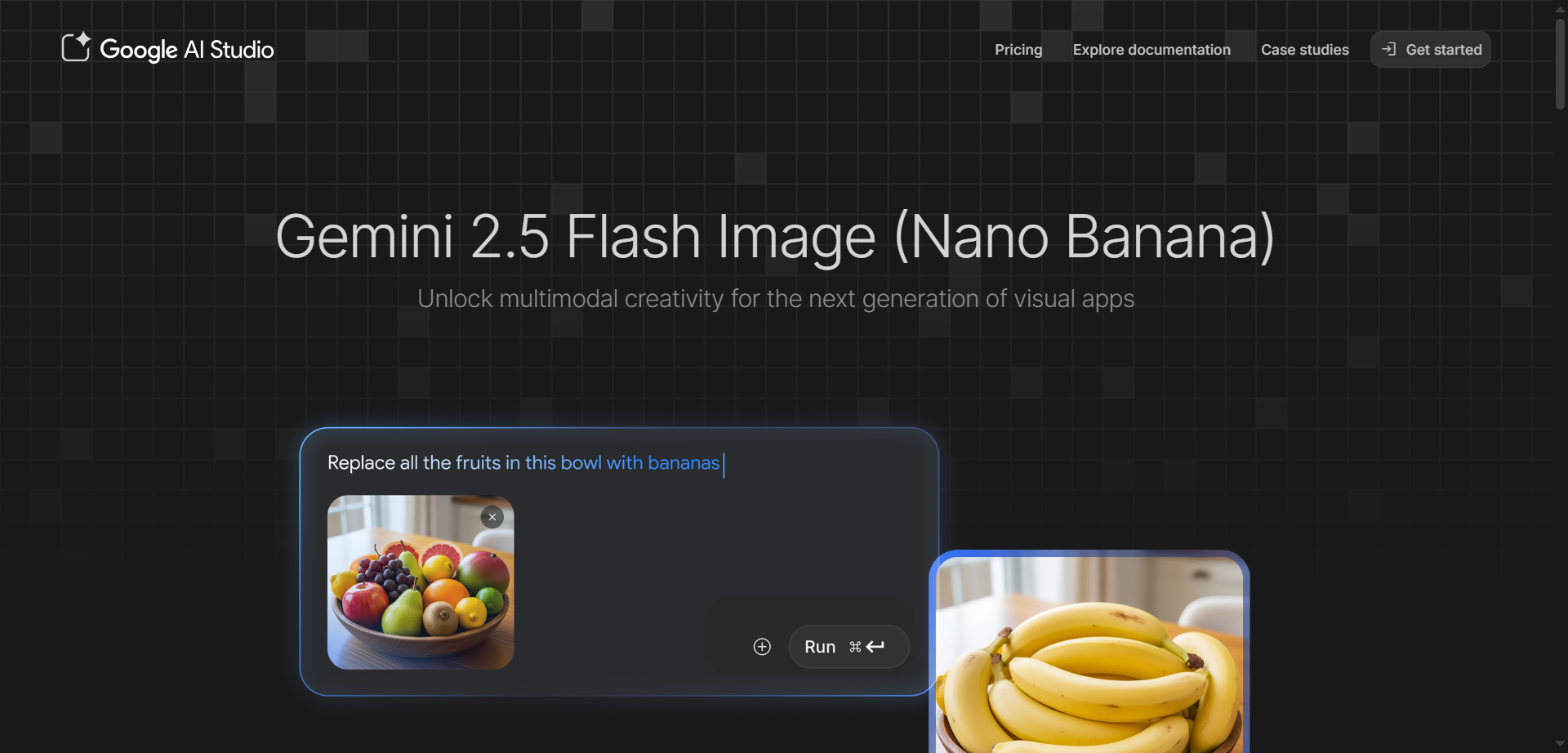

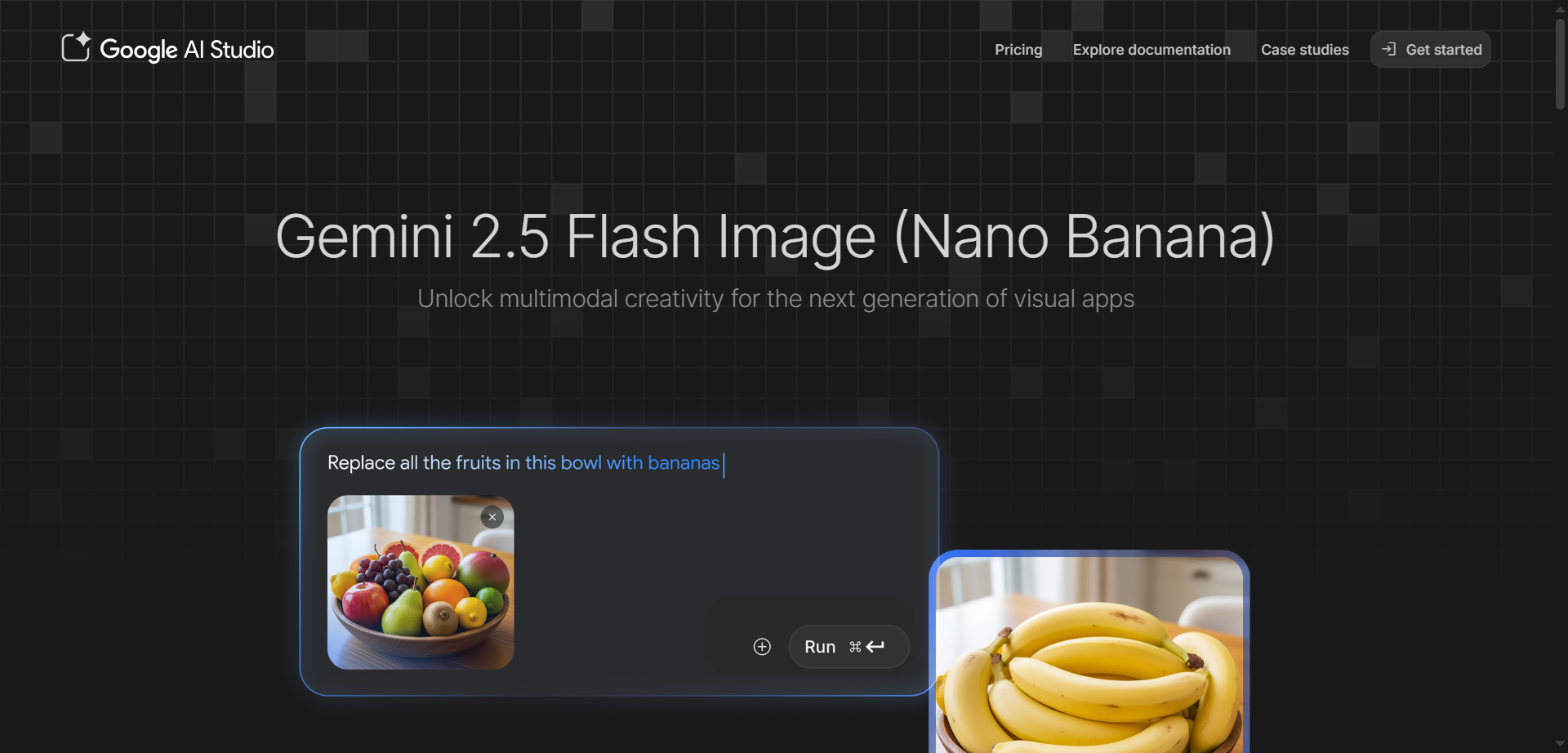

Nano Banana

Gemini 2.5 Flash Image is Google's state-of-the-art AI image generation and editing model, nicknamed Nano Banana, designed for fast, high-quality creative workflows. It excels at blending multiple images into seamless compositions, maintaining character consistency across scenes, and making precise edits through natural language prompts like blurring backgrounds or changing poses. Accessible via Google AI Studio and Gemini API, it leverages Gemini's world knowledge for realistic transformations, style transfers, and conversational refinements without restarting from scratch. Developers love its low latency, token-based pricing at about $0.039 per image, and SynthID watermarking for easy AI identification. Perfect for product mockups, storytelling, education tools, and professional photo editing.

GlobalGPT

GlobalGPT is an all-in-one AI platform that unifies leading models for writing, coding, research, image generation, and video creation—accessible through a single account and subscription. It brings together top models like GPT-5, GPT-4.1, GPT-4o, GPT-o3, Claude Opus 4.1, Claude Sonnet 4, Gemini 2.5 Pro, DeepSeek V3, Unikorn (MJ-like) V7, Flux, Ideogram, and Google Veo 3 so you can chat, draft content, analyze documents, generate images, and produce cinematic videos without switching tools. Designed for over 2 million users worldwide, GlobalGPT also includes advanced research agents powered by GPT-4o and perplexity for deep web analysis, PDF summarization, and market insights, all wrapped in a clean interface with flexible credit-based plans.

GPT Proto

GPT Proto provides developers with a unified API to access top AI models for text, image, video, and audio generation, offering rock-solid uptime, lightning-fast responses, and the lowest prices without managing multiple keys or platforms. It aggregates leading models like GPT-5 series, Claude Opus/Sonnet/Haiku, Gemini variants, Grok, and specialized tools for creators, with significant discounts such as 40% off market rates on many. From solo projects to enterprise scale, it ensures 95% of requests respond within 20 seconds, half in just 6 seconds, via robust infrastructure and failover. Quick setup lets you sign up, add credits, generate one API key, and integrate seamlessly into apps, agents, or workflows for prototyping or production.

Gemini CLI

Gemini CLI is an open-source AI agent from Google that brings the power of Gemini 3 directly into your terminal, enabling developers to build, debug, and deploy apps with natural language commands. It uses a ReAct loop for complex tasks like querying large codebases, generating apps from images or PDFs, fixing bugs, automating workflows, and handling GitHub issues, all while running locally on Mac, Windows, or Linux. Key features include slash commands for quick actions, custom commands for shortcuts, checkpointing to save sessions, headless mode for scripting, sandboxing for secure tool execution, context files like GEMINI.md for project-specific instructions, token caching to cut costs, and integrations with Google Search, MCP servers for extensions like Veo or Imagen, and IDEs like VS Code. Install via npm for free access to powerful coding assistance, research, content generation, and task management right in your command line.

Editorial Note

This page was researched and written by the ATB Editorial Team. Our team researches each AI tool by reviewing its official website, testing features, exploring real use cases, and considering user feedback. Every page is fact-checked and regularly updated to ensure the information stays accurate, neutral, and useful for our readers.

If you have any suggestions or questions, email us at hello@aitoolbook.ai