- AI Developers & Researchers: Integrate Tavily's Search API into their AI applications, chatbots, and autonomous agents to provide real-time web access and context.

- AI Agents: Autonomous AI systems that need to perform web research, gather information, and make informed decisions based on up-to-date data.

- Content Creators & Marketers (using AI): Supercharge reports and marketing content with real-time, comprehensive data, ensuring accuracy and relevance.

- Businesses Building AI Solutions: Leverage Tavily for data enrichment, automating research processes, and empowering chatbots with precise, up-to-date responses.

- Startups & Enterprises: Tavily is built to scale, making it a reliable solution for various usage levels, from new creators to large organizations.

- Purpose-Built for LLMs & AI Agents: Unlike general search engines, Tavily is explicitly designed to optimize search results and content for AI consumption, making it ideal for RAG applications.

- Efficient Information Extraction: It aggregates information from multiple sources (up to 20 sites per call), then uses proprietary AI to score, filter, and rank the most relevant content, reducing the burden of manual scraping and filtering for developers.

- Real-time Web Access with High Rate Limits: Provides fast, reliable access to up-to-date information at scale, which is critical for dynamic AI applications.

- Transparency & Source Attribution: All retrieved information includes citations (URLs), ensuring transparency and allowing AI applications to provide credible, verifiable responses.

- Customizable Search Experience: Offers granular control over search depth, domain targeting, and content parsing, giving developers flexibility to tailor search results.

- Directly Addresses LLM Hallucinations: By supplying accurate, real-time, context-optimized information, it helps reduce the tendency of LLMs to "make things up."

- Developer-Friendly: Simple API setup, clear documentation, and support for popular programming languages make it easy for AI developers to integrate.

- Comprehensive Web Data Solution: Beyond just search, the Extract and Crawl APIs offer powerful capabilities for deep web content acquisition.

- Scalability & Reliability: Built for high-volume workloads, with enterprise-grade security (SOC 2 certified, zero data retention).

- Transparency through Citations: The inclusion of source URLs is excellent for building trustworthy AI applications.

- Focus on Relevance: The AI-powered filtering and ranking of content snippets ensure that LLMs receive the most pertinent information.

- API Key Requirement: Although a free tier exists, core functionality requires an API key, which may deter casual users who are not building AI applications.

- Cost for High Usage: Beyond the free credits, usage is credit-based, which can become costly for extensive or complex research tasks.

- Not a Direct User-Facing Tool: It's primarily a backend service for AI developers, not an end-user search engine.

- Reliance on External Models: While it enhances LLMs, its utility is tied to the performance and capabilities of the LLM/AI agent it's integrated with.
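For developers, the search controls and source citations described in the list above can be sketched in a few lines of Python. This is a minimal illustration, not Tavily's documented client: the request field names (`query`, `search_depth`, `include_domains`, `max_results`) and the result keys (`url`, `content`, `score`) are assumptions to check against Tavily's API reference, and the sample results are invented placeholders. The client-side score threshold is an extra step added here for illustration; it is separate from the proprietary ranking Tavily applies.

```python
import json
from typing import Any, Optional


def build_search_payload(
    query: str,
    search_depth: str = "basic",
    include_domains: Optional[list] = None,
    max_results: int = 5,
) -> dict:
    """Assemble a JSON-serializable request body (field names are assumptions)."""
    if search_depth not in ("basic", "advanced"):
        raise ValueError("search_depth must be 'basic' or 'advanced'")
    payload: dict = {
        "query": query,
        "search_depth": search_depth,
        "max_results": max_results,
    }
    # Only restrict domains when the caller asks for it.
    if include_domains:
        payload["include_domains"] = list(include_domains)
    return payload


def format_with_citations(results: list, min_score: float = 0.5) -> str:
    """Keep results at or above min_score, best first, and attach each source URL."""
    kept = sorted(
        (r for r in results if r.get("score", 0.0) >= min_score),
        key=lambda r: r["score"],
        reverse=True,
    )
    return "\n".join(
        f"[{i}] {r['content']} ({r['url']})" for i, r in enumerate(kept, start=1)
    )


if __name__ == "__main__":
    payload = build_search_payload(
        "latest research on retrieval-augmented generation",
        search_depth="advanced",
        include_domains=["example.com"],
        max_results=3,
    )
    print(json.dumps(payload, indent=2))

    # Hypothetical response items, shaped like the assumed result keys.
    sample_results: list = [
        {"url": "https://example.com/a", "content": "Alpha", "score": 0.9},
        {"url": "https://example.com/b", "content": "Beta", "score": 0.2},
        {"url": "https://example.org/c", "content": "Gamma", "score": 0.7},
    ]
    print(format_with_citations(sample_results))
```

Passing the formatted, numbered snippets to an LLM as context lets the model cite each claim by its source URL, which is the attribution benefit the list above describes.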

| Plan | Price | Includes |
| --- | --- | --- |
| Researcher | $0.00 | No credit card required; email support |
| Pay As You Go | $0.008 per credit | Cancel anytime; email support |
| Project | $30.00 | Higher rate limits; email support |
| Enterprise | Custom | Custom rate limits; enterprise-grade support and SLAs; enterprise-grade security and privacy; custom seats |
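The pay-as-you-go rate above lends itself to a quick budget estimate. The following is a minimal sketch in Python that assumes only the $0.008-per-credit price listed here. How many credits a given search, extract, or crawl call consumes is not stated on this page, so the credit counts are hypothetical inputs.

```python
from decimal import Decimal

# Pay-as-you-go price per credit, taken from the plan listing above.
PAY_AS_YOU_GO_RATE = Decimal("0.008")


def pay_as_you_go_cost(credits: int, rate: Decimal = PAY_AS_YOU_GO_RATE) -> Decimal:
    """Return the cost in dollars of a given number of credits."""
    if credits < 0:
        raise ValueError("credits must be non-negative")
    return rate * credits


def credits_for_budget(budget: Decimal, rate: Decimal = PAY_AS_YOU_GO_RATE) -> int:
    """Return how many whole credits a dollar budget buys at the given rate."""
    return int(budget // rate)


if __name__ == "__main__":
    # Hypothetical workload: 5,000 credits in one billing period.
    print(pay_as_you_go_cost(5000))
    # Hypothetical budget of 30 dollars spent entirely on pay-as-you-go credits.
    print(credits_for_budget(Decimal("30.00")))
```

`Decimal` is used instead of `float` so that fractional-cent rates multiply without rounding drift.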

Similar AI Tools

jina

Jina AI is a Berlin-based software company that provides a "search foundation" platform, offering various AI-powered tools designed to help developers build the next generation of search applications for unstructured data. Its mission is to enable businesses to create reliable and high-quality Generative AI (GenAI) and multimodal search applications by combining Embeddings, Rerankers, and Small Language Models (SLMs). Jina AI's tools are designed to provide real-time, accurate, and unbiased information, optimized for LLMs and AI agents.

LangChain AI

LangChain AI Local Deep Researcher is an autonomous, fully local web research assistant designed to conduct in-depth research on user-provided topics. It leverages local Large Language Models (LLMs) hosted by Ollama or LM Studio to iteratively generate search queries, summarize findings from web sources, and refine its understanding by identifying and addressing knowledge gaps. The final output is a comprehensive markdown report with citations to all sources.

OpenAI GPT 4o Sear..

GPT-4o Search Preview is a powerful experimental feature of OpenAI’s GPT-4o model, designed to act as a high-performance retrieval system. Rather than just generating answers from training data, it allows the model to search through large datasets, documents, or knowledge bases to surface relevant results with context-aware accuracy. Think of it as your AI assistant with built-in research superpowers—faster, smarter, and surprisingly precise. This preview gives developers a taste of what’s coming next: an intelligent search engine built directly into the GPT-4o ecosystem.

Perplexity AI

Perplexity AI is a powerful AI-powered answer engine and search assistant launched in December 2022. It combines real-time web search with large language models (like GPT-4.1, Claude 4, Sonar), delivering direct answers with in-text citations and multi-turn conversational context.

Teammately

Teammately.ai is an AI agent specifically designed for AI engineers to streamline and accelerate the development of robust, production-level AI applications. Its primary purpose is to automate various critical stages of the AI development lifecycle, from prompt generation and self-refinement to comprehensive evaluation, efficient RAG (Retrieval Augmented Generation) building, and interpretable observability, ensuring AI solutions are robust and less prone to failure.

Teammately

Teammately.ai is an AI agent specifically designed for AI engineers to streamline and accelerate the development of robust, production-level AI applications. Its primary purpose is to automate various critical stages of the AI development lifecycle, from prompt generation and self-refinement to comprehensive evaluation, efficient RAG (Retrieval Augmented Generation) building, and interpretable observability, ensuring AI solutions are robust and less prone to failure.

Teammately

Teammately.ai is an AI agent specifically designed for AI engineers to streamline and accelerate the development of robust, production-level AI applications. Its primary purpose is to automate various critical stages of the AI development lifecycle, from prompt generation and self-refinement to comprehensive evaluation, efficient RAG (Retrieval Augmented Generation) building, and interpretable observability, ensuring AI solutions are robust and less prone to failure.

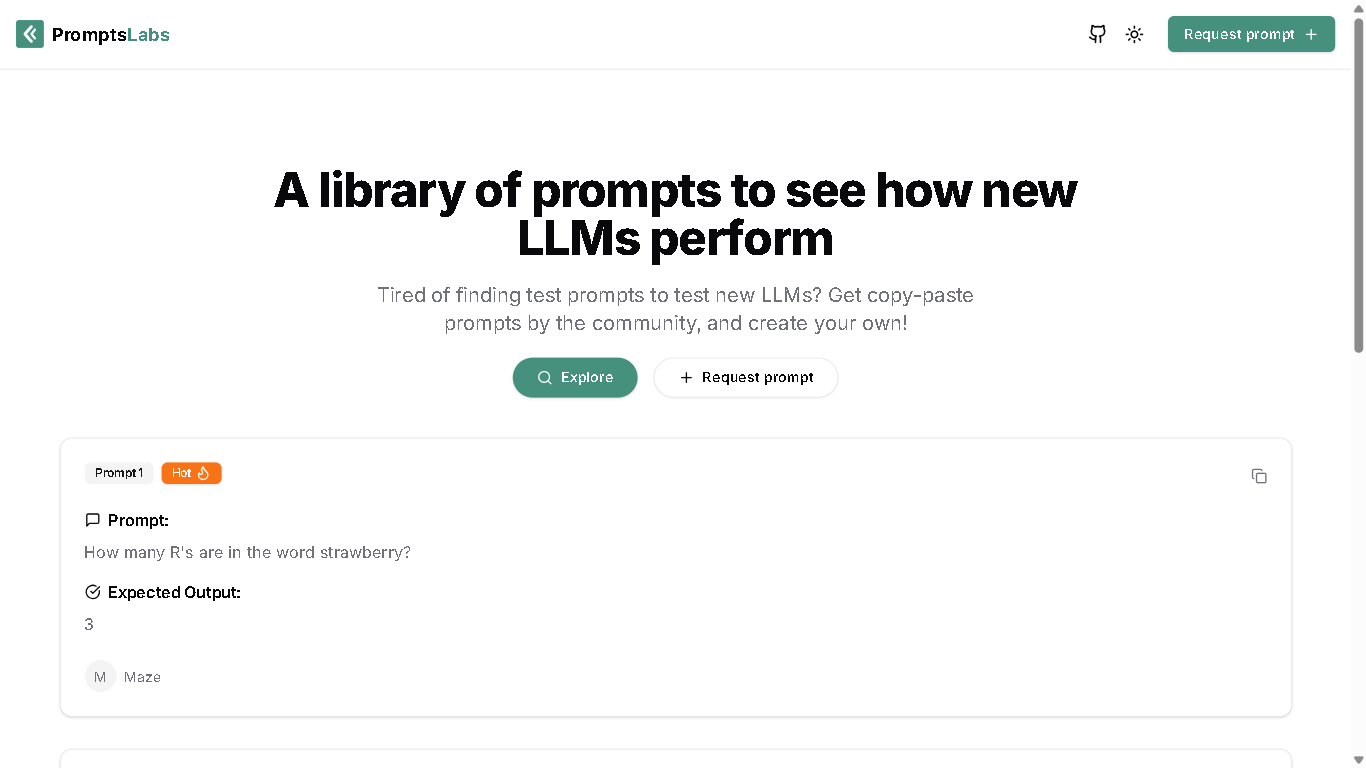

PromptsLabs

PromptsLabs is an open-source library of curated prompts designed to test and evaluate the performance of large language models (LLMs). It allows users to explore, contribute, and request prompts to better understand LLM capabilities.

ChatBetter

ChatBetter is an AI platform designed to unify access to all major large language models (LLMs) within a single chat interface. Built for productivity and accuracy, ChatBetter leverages automatic model selection to route every query to the most capable AI—eliminating guesswork about which model to use. Users can directly compare responses from OpenAI, Anthropic, Google, Meta, DeepSeek, Perplexity, Mistral, xAI, and Cohere models side by side, or merge answers for comprehensive insights. The system is crafted for teams and individuals alike, enabling complex research, planning, and writing tasks to be accomplished efficiently in one place.

Haystack by Deepse..

Haystack is an open-source framework developed by deepset for building production-ready search and question-answering systems powered by language models. It enables developers to connect LLMs with structured and unstructured data sources, perform retrieval-augmented generation, and create semantic search pipelines. Haystack provides flexibility to integrate various retrievers, readers, and document stores like Elasticsearch, FAISS, and Pinecone. It’s widely used for enterprise document Q&A, chatbots, and knowledge management systems, helping teams deploy scalable, high-performance AI-powered search.

LLM Chat

LLMChat is a privacy-focused, open-source AI chatbot platform designed for advanced research, agentic workflows, and seamless interaction with multiple large language models (LLMs). It offers a minimalistic, intuitive interface for exploring complex topics in depth, with modes such as Deep Research and Pro Search, the latter adding real-time web access for current data. The platform emphasizes user privacy by storing all chat history locally in the browser, so conversations never leave the device. LLMChat supports many popular LLM providers, including OpenAI, Anthropic, and Google, and lets users customize AI assistants with personalized instructions and knowledge bases for applications ranging from research to content generation and coding assistance.

LLM as-a-service

LLM.co LLM-as-a-Service (LLMaaS) is a secure, enterprise-grade AI platform that provides private and fully managed large language model deployments tailored to an organization’s specific industry, workflows, and data. Unlike public LLM APIs, each client receives a dedicated, single-tenant model hosted in private clouds or virtual private clouds (VPCs), ensuring complete data privacy and compliance. The platform offers model fine-tuning on proprietary internal documents, semantic search, multi-document Q&A, custom AI agents, contract review, and offline AI capabilities for regulated industries. It removes infrastructure burdens by handling deployment, scaling, and monitoring, while enabling businesses to customize models for domain-specific language, regulatory compliance, and unique operational needs.

Ask AI

Ask AI (iAsk) is a free AI answer engine that lets you ask questions in natural language and receive instant, accurate, and factual answers, positioning itself as an alternative to traditional search engines and tools like ChatGPT. It focuses on helping users move quickly from question to solution with clear, concise responses, and can also summarize long web pages into easy-to-read bullet points, create images from simple text prompts, and check grammar with one click. With over 500 million searches processed and more than 1.4 million searches made daily, iAsk is built to accelerate research, improve learning, and save up to 80% of the time typically spent hunting for information. Its Pro tier adds advanced capabilities powered by benchmark-leading models.

Andi Search

Andi Search is an AI-powered conversational search engine designed to deliver direct, well-researched answers instead of traditional link-based results. It helps users explore the web through natural language queries, combining real-time information, semantic understanding, and generative AI to provide clear, concise, and ad-free responses.

Editorial Note

This page was researched and written by the ATB Editorial Team. Our team researches each AI tool by reviewing its official website, testing features, exploring real use cases, and considering user feedback. Every page is fact-checked and regularly updated to ensure the information stays accurate, neutral, and useful for our readers.

If you have any suggestions or questions, email us at hello@aitoolbook.ai